Warehouse A has 1,000 units.

➤

Warehouse B has 800 units.

Real-world Applications ❘ 129

➤

Demand:

➤

Store 1 needs 500 units.

➤

Store 2 needs 900 units.

➤

Store 3 needs 400 units.

➤

(Total supply = 1,800, total demand = 1,800. They match.)

➤

Shipping costs (per unit):

➤

From Warehouse A to Store 1: $2

➤

From Warehouse A to Store 2: $4

➤

From Warehouse A to Store 3: $5

➤

From Warehouse B to Store 1: $3

➤

From Warehouse B to Store 2: $6

➤

From Warehouse B to Store 3: $3

The question is, how many units should you ship from each warehouse to each store to meet all demand, respect all supply limits, and do so at the minimum possible cost?

Step 2: Define Objective and Constraints

You now need to translate this business reality into the vectors and matrices that linprog understands. Listing 6-12 first defines the decision variables. There are six possible shipping routes (2 warehouses * 3 stores). You can flatten these into a single list of six variables: A->S1, A->S2, A->S3, B->S1, B->S2, and B->S3.

Next, you define the objective function. You want to minimize total cost, so you need to create a cost vector c containing the shipping prices for these six routes in order.

Finally, you define the constraints. In this problem, the constraints are equalities: the amount shipped from Warehouse A must exactly equal 1,000, and the amount received by Store 1 must exactly equal 500. Listing 6-12 sets this up using the A_eq matrix (defining which routes contribute to which constraint) and the b_eq vector (the actual supply/demand limits).

LISTING 6-12: SETTING UP THE TRANSPORTATION PROBLEM

import numpy as np

from scipy.optimize import linprog

# --- Step 1: Define the Problem ---

# Objective function (to be minimized):

# Cost = 2*x1 + 4*x2 + 5*x3 + 3*x4 + 6*x5 + 3*x6

c = [2, 4, 5, 3, 6, 3]

# Constraints (Equality): A_eq @ x = b_eq

130 ❘ CHAPTER 6 OPTimizATiOn TECHniquES fOR BuSinESS STRATEgy

# We have 5 constraints (2 supply, 3 demand)

# Variables: [x1, x2, x3, x4, x5, x6]

A_eq = [

[1, 1, 1, 0, 0, 0], # Wh. A Supply

[0, 0, 0, 1, 1, 1], # Wh. B Supply

[1, 0, 0, 1, 0, 0], # Store 1 Demand

[0, 1, 0, 0, 1, 0], # Store 2 Demand

[0, 0, 1, 0, 0, 1] # Store 3 Demand

]

b_eq = [

1000, # Wh. A Supply

800, # Wh. B Supply

500, # Store 1 Demand

900, # Store 2 Demand

400 # Store 3 Demand

]

# --- Define the Bounds ---

# We can't ship negative units

bounds = (0, None)

The final lines of Listing 6-12 set up logical limits on the variables. The only limit here is physical reality: you cannot ship a negative number of products.

Step 3: Optimizing

With the problem fully translated into c, A_eq, b_eq, and bounds, you can hand it off to the solver.

The method=‘highs’ is SciPy’s recommended modern solver for these types of problems. The code in Listing 6-13 runs the solver and displays the results.

LISTING 6-13: RUNNING THE SOLVER AND DISPLAYING THE RESULTS

# --- Step 3: Run the Optimizer ---

result = linprog(c, A_eq=A_eq, b_eq=b_eq, bounds=bounds, method='highs')

# --- Step 4: Interpret the Results ---

if result.success:

shipping_plan = result.x.reshape(2, 3) # Reshape 1x6 array into 2x3 matrix min_cost = result.fun

print("--- Optimal Shipping Plan (Units) ---")

print(f" Store 1 | Store 2 | Store 3")

print(f"Warehouse A: {shipping_plan[0, 0]:>7.0f} | {shipping_

plan[0, 1]:>7.0f} | {shipping_plan[0, 2]:>7.0f}")

print(f"Warehouse B: {shipping_plan[1, 0]:>7.0f} | {shipping_

plan[1, 1]:>7.0f} | {shipping_plan[1, 2]:>7.0f}")

print("\n---")

print(f"Total Minimized Shipping Cost: ${min_cost:.2f}")

else:

print("Optimization failed.")

print(result.message)

Real-world Applications ❘ 131

The optimizer has produced a clear, actionable plan. To minimize costs, Warehouse A should handle all of Store 1’s and Store 3’s demand, plus a small part of Store 2’s. Warehouse B should focus all its supply on fulfilling the rest of Store 2’s large demand. You see this in the results:

--- Optimal Shipping Plan (Units) ---

Store 1 | Store 2 | Store 3

Warehouse A: 100 | 900 | 0

Warehouse B: 400 | 0 | 400

---

Total Minimized Shipping Cost: $6200.00

Any other combination of shipments, while it might fulfill the demand, would result in a higher total cost. This is the kind of problem that saves large companies millions of dollars in logistics.

Integer Programming for Workforce Scheduling

So far, these optimization tools have served you well, but they share a common assumption: that the answers can be fractional. And for many problems, that’s perfectly fine. It makes sense to allocate 38.38% of a portfolio or to understand that the theoretical profit peak is at 714.29 units. You can simply round to the nearest whole number.

However, sometimes you are confronted with decisions where rounding is not just inaccurate, it’s not possible. Consider the following scenario.

Imagine you’re a manager. Your optimization model tells you to dispatch 2.5 trucks to a new location. How do you dispatch half a truck? Or a model for a factory expansion that returns an answer of 0.6, when the only options are Yes (1) or No (0). The most common version of this problem is workforce scheduling. You simply cannot schedule 0.7 of an employee to cover a shift.

These situations require a new, more specialized tool: integer programming (IP). This is a branch of optimization where some, or all, of the decision variables are restricted to being whole numbers.

Fortunately, the workhorse scipy.optimize.linprog has a parameter called integrality that allows you to solve exactly these kinds of puzzles.

Let’s step into the shoes of a call center manager. They have a classic, real-world scheduling puzzle.

They need to create a weekly staffing plan that meets the minimum number of employees required for each day, all while paying the lowest possible labor cost.

The core of the challenge lies in the shift structure. Each employee works for five consecutive days and then gets two days off. This means there are only seven possible “shift types” an employee can have (one starting on Monday, one on Tuesday, and so on). Each employee costs the company a flat $500 per week, regardless of which shift they are on.

The manager’s daily demand, however, is not flat. The call volume fluctuates, requiring a different number of employees each day: 17 on Monday, 13 on Tuesday, 15 on Wednesday, 19 on Thursday, 17 on Friday, 10 on Saturday, and only 8 on Sunday.

The question is, how many employees should be hired for each of the seven shift types to meet this fluctuating daily demand at the absolute minimum cost?

132 ❘ CHAPTER 6 OPTimizATiOn TECHniquES fOR BuSinESS STRATEgy Step 1: formulating the Problem

This is an integer programming problem. You can’t hire 2.5 people for the “Monday Start” shift. The answer must be a whole number.

First, you need to define the seven variables, which represent the seven decisions the manager has to make: x_mon (the number of employees starting on Monday), x_tue (starting on Tuesday), and so on, all the way to x_sun.

Second, you define the objective function. This is simple: you want to minimize the total cost. Since every employee costs $500, the function is Cost = 500*x_mon + 500*x_tue + ... + 500*x_sun.

Third, you build the constraints. This is the tricky part. You need to ensure that the number of people working on any given day is greater than or equal to that day’s demand. Let’s take Monday as an example. Who is on duty on a Monday?

➤

Employees who started their five-day shift on Monday (today).

➤

Employees who started on Sunday (day 2 of their shift).

➤

Employees who started on Saturday (day 3 of their shift).

➤

Employees who started on Friday (day 4 of their shift).

➤

Employees who started on Thursday (day 5 of their shift). Employees who started on Tuesday or Wednesday are on their days off.

So, the constraint for Monday becomes: x_mon + x_thu + x_fri + x_sat + x_sun >= 17

You then build a similar constraint for all seven days of the week, resulting in a system of seven inequalities. With this “blueprint” in hand, you can feed the problem to the solver. The resulting code is shown in Listing 6-14.

LISTING 6-14: INTEGER PROGRAMMING FOR WORK SCHEDULES

import numpy as np

from scipy.optimize import linprog

# --- Step 1: Define the Problem ---

# Objective function (Minimize Cost):

# Cost = 500*x1 + 500*x2 + ... + 500*x7

c = [500] * 7 # Cost is $500 for each of the 7 shift types

# Constraints (Left-hand side): A_ub @ x >= b_ub

# To make it "greater than", we multiply A and b by -1

# A_ub @ x <= b_ub ---> -A_ub @ x >= -b_ub

#

# Mon Tue Wed Thu Fri Sat Sun (Shifts Starting)

# Mon: 1 0 0 1 1 1 1 >= 17

# Tue: 1 1 0 0 1 1 1 >= 13

# Wed: 1 1 1 0 0 1 1 >= 15

# Thu: 1 1 1 1 0 0 1 >= 19

Real-world Applications ❘ 133

# Fri: 1 1 1 1 1 0 0 >= 17

# Sat: 0 1 1 1 1 1 0 >= 10

# Sun: 0 0 1 1 1 1 1 >= 8

A_ub = -np.array([

[1, 0, 0, 1, 1, 1, 1], # Mon

[1, 1, 0, 0, 1, 1, 1], # Tue

[1, 1, 1, 0, 0, 1, 1], # Wed

[1, 1, 1, 1, 0, 0, 1], # Thu

[1, 1, 1, 1, 1, 0, 0], # Fri

[0, 1, 1, 1, 1, 1, 0], # Sat

[0, 0, 1, 1, 1, 1, 1] # Sun

])

b_ub = -np.array([17, 13, 15, 19, 17, 10, 8]) # Daily minimums

# --- Step 2: Define Bounds and Integrality ---

bounds = (0, None) # Can't hire negative people

# Tell the solver all 7 variables must be integers

integrality = [1] * 7

# --- Step 3: Run the Optimizer ---

result = linprog(c, A_ub=A_ub, b_ub=b_ub, bounds=bounds, integrality=integrality, method='highs')

# --- Step 4: Interpret the Results ---

if result.success:

schedule = result.x

min_cost = result.fun

total_employees = np.sum(schedule)

days = ['Monday', 'Tuesday', 'Wednesday', 'Thursday', 'Friday', 'Saturday',

'Sunday']

print("--- Optimal Staffing Schedule ---")

print("Employees starting on:")

for i, num in enumerate(schedule):

print(f" {days[i]:<10}: {num:.0f}")

print("\n---")

print(f"Total Employees Hired: {total_employees:.0f}")

print(f"Total Minimized Weekly Cost: ${min_cost:.2f}")

else:

print("Optimization failed.")

print(result.message)

Step 2: interpreting the Results

The results of the solver are as follows:

--- Optimal Staffing Schedule ---

Employees starting on:

Monday : 6

Tuesday : 4

Wednesday : 0

Thursday : 7

134 ❘ CHAPTER 6 OPTimizATiOn TECHniquES fOR BuSinESS STRATEgy Friday : 0

Saturday : 0

Sunday : 2

--- Total Employees Hired: 19

Total Minimized Weekly Cost: $9500.00

This is a powerful, non-obvious solution. The solver determined that the most cost-effective way to meet the fluctuating daily demand is to hire a total of 19 employees, starting them on Monday, Tuesday, Thursday, and Sunday. No employees should start their shifts on Wednesday, Friday, or Saturday.

This plan meets all the minimum staffing requirements for each day at the absolute lowest possible cost. This type of integer programming is a fundamental tool for managers in operations, HR, and logistics.

SUMMARY

This chapter has been a journey, one that began with a single question from the last chapter: “how do you find the exact peak of the profit curve?” You’ve traveled from that one simple query to a complete framework for solving complex business problems. You’ve seen that every optimization problem, no matter how intimidating, can be broken down into two core components: an objective (what you want) and its constraints (the rules you must follow).

Using the scipy.optimize toolkit, you began by answering that initial question, finding the simple, unconstrained peak of the profit curve. From there, you moved into the world of real-world limits, using linear programming to solve a “product mix” problem where you discovered a surprising, non-obvious solution that maximized profit by focusing on a lower-margin product. You then explored the fundamental difference between unconstrained and constrained problems, visualizing the

“feasible region” that defines the boundaries of all real-world decisions.

With this foundation, you were ready to tackle truly complex nonlinear challenges, building an optimal financial portfolio and generating the efficient frontier to visualize the trade-off between risk and return. Your toolkit expanded again to solve tangible operations problems, including a complex supply chain logistics puzzle and an intricate integer programming problem for scheduling staff, where fractional answers simply weren’t an option. Finally, you brought all these concepts together by building a sophisticated pricing model that optimized for two interacting products.

You now have a proven framework for translating almost any business challenge, from finance to operations to marketing, into a solvable model. You have officially moved from analysis to prescription, and you are equipped with the tools to find not just a good solution, but the “best” one.

CONTINUE YOUR LEARNING

For readers who want to dive deeper into the powerful optimization tools discussed in this chapter, the following official resources are highly recommended:

Continue your Learning ❘ 135

➤

SciPy Optimization User Guide: The definitive guide to all solvers available in the library, including many advanced algorithms not covered here.

➤

Linear programming with SciPy: Detailed documentation on the linprog function, including advanced options for the “highs” solvers used in the examples.

➤

General minimization: In-depth details on the minimize function used for the portfolio examples, including the different algorithms available (like SLSQP and trust-constr).

➤

CVXPY: If you are interested in a more advanced, algebraic way to model complex convex optimization problems, CVXPY is the industry standard in Python.

7Probability and Statistics for

Business Analytics

In the previous chapter, the optimization models led to a powerful, precise answer: the optimal production quantity was 714.29 units, yielding a maximum profit of $10,714.29. But this answer was built on a critical assumption: that the inputs (cost, demand, etc.) were fixed, known numbers.

Business reality is much more complex. In that reality, the demand isn’t exactly 714, it’s around 714, and it could be 650 on a slow day or 800 during a sales rush. The variable costs aren’t exactly $50, they fluctuate. This chapter is about introducing the framework for making smart decisions in the face of this real-world uncertainty. It moves from the what-if analysis of the previous chapters to a what’s-likely analysis.

To do this, you will use a familiar and new set of Python libraries. You’ll use numpy.random to simulate this randomness, scipy.stats to run formal statistical tests, and statsmodels to build powerful models that explain why the numbers change.

THE PYTHON STATISTICS ECOSYSTEMS

Before diving into solving problems, it’s helpful to know what tools are available. Unlike optimization, which is dominated by scipy.optimize, the statistics landscape is a collaboration between several key libraries, each with a specific job:

➤

NumPy (numpy.random): NumPy’s core library provides the array structures, but its random submodule is the tool for creating data. You’ll see how to use it to simulate sales, model customer behavior, and generate the random inputs for the risk analysis.

➤

SciPy (scipy.stats): This is the primary statistical toolkit. Once you have data (either real or simulated), you use scipy.stats to analyze it. It’s packed with hundreds of functions for describing distributions, calculating confidence intervals, and, most importantly, running hypothesis tests like the t-test.

138 ❘ CHAPTER 7 PRoBABiliTy And STATiSTiCS foR BuSinESS AnAlyTiCS

➤

Statsmodels (statsmodels.api): To move beyond simple tests and find the relationship between variables (like how ad spend affects sales), you’ll use statsmodels. It’s famous for its ability to perform sophisticated linear regressions and produce detailed summary tables that help elucidate what’s driving your business.

➤

Pandas: This is the container that holds everything together. While not a statistics library itself, Pandas DataFrames are the standard way to load, clean, and organize the real-world data that you will feed into SciPy and Statsmodels.

NOTE These libraries are free and can be added using pip or conda.

The workflow in this chapter will generally follow this path: you will use numpy.random to simulate realistic data, scipy.stats to test it, and statsmodels to build predictive models from it.

While these packages serve as the foundation for probability and statistics, the landscape of statistical packages can be very broad depending on the problem you are trying to solve. We cannot cover all of the possible options in this chapter alone, so the “Continue Your Learning” section at the end of this chapter has more information on additional statistical libraries.

UNDERSTANDING RANDOM VARIABLES AND DISTRIBUTIONS

IN BUSINESS CONTEXTS

Before you can model uncertainty, you need a language to describe it. In statistics, we do this with random variables (a variable that can take on a range of values, not just one) and distributions (the shape of that randomness).

Imagine a random variable called daily sales. You know that tomorrow’s daily sales probably won’t be $10, and it probably won’t be $10 million, but it could be any number within a realistic range.

On Monday, daily sales are $480. On Tuesday, daily sales stop at $510, and it continues to $495 on Wednesday. Each day, it takes on a new, slightly different value based on all the random factors of the real world. Those factors that make daily sales move up and down, that’s what makes daily sales a random variable.

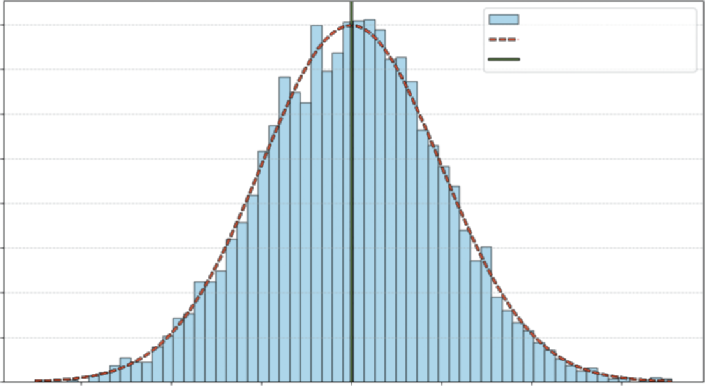

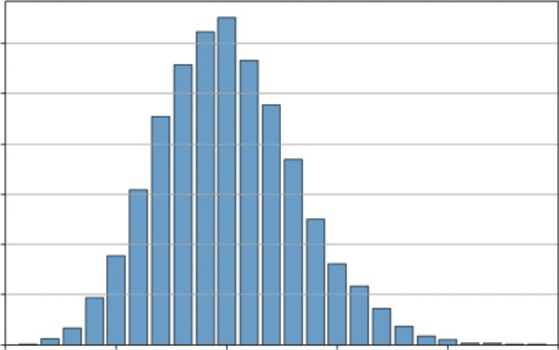

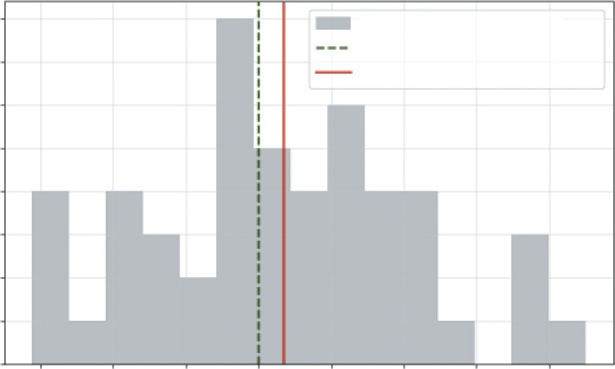

If you just looked at one or two days, you wouldn’t see a pattern. If you recorded and plotted this value for 1,000 days and grouped them together, you’d see a shape emerge such as the one shown

This shape is the distribution. You’d likely see a big pile of values clustered around the average and fewer and fewer values as you get farther out. This shape is the visual record of all the variable’s past movements. It also tells you the probability of where the variable might land next.

normal distribution, sometimes also referred to as a bell curve. It represents an empirical distribution because it uses real data to create the distribution.

Each bar represents the proportion of observations that are around that value, and the dashed line shows the general shape carted by the bars.

understanding Random Variables and distributions in Business Contexts ❘ 139

Daily Sales : Normal Distribution

0.008

Sample Data

PDF (µ = 500, σ = 50)

Mean: 500

0.007

0.006

vations 0.005

0.004

0.003

cent of ObsererP 0.002

0.001

0.000

350

400

450

500

550

600

650

Sales Amount

FIGURE 7-1: Distribution of daily sales.

You can see that this fits with what you generally know to be true in real life. Usually there is a baseline level of sales in business—sometimes that value goes up due to the holidays, and other times it goes down due to other events. The plot aligns with that reality, you can see that the daily sales observations around 500 shows up most frequently, and it is rare to see 400- or 600-dollar sales days.

Now that you have a general idea of what distributions and random variables look like, the next section explores how different data can create different distributions.

Discrete vs. Continuous Distributions

In business, we generally work with two key types of variables:

➤

Discrete variables: The variable can only take on specific, countable values. For example, a customer either converted (1) or did not convert (0). Another example is the number of items sold, which can be 1, 2, 3, and so on (you can’t sell 1.5 t-shirts, for example).

➤

Continuous variables: The variable can take on any value within a range. Examples include the exact time a user spends on your site (e.g., 12.534 seconds) or the exact amount of a sale.

This distinction between discrete and continuous variables is critical because it dictates how you model them. A discrete variable, like number of items sold, moves in distinct, whole-number steps (1, 2, 3). A continuous variable, like time spent on site, is measured, not counted, and can take on any fractional value (e.g., 12.51 or 12.52 seconds).

Once you’ve identified your variable’s type, the next logical step is to understand its behavior. Not all data fits the shape of the normal distribution. If you let a random variable (such as the number of items purchased) take on thousands of values, you would see a new distinct shape emerge.

140 ❘ CHAPTER 7 PRoBABiliTy And STATiSTiCS foR BuSinESS AnAlyTiCS

The Most Common Business Distributions

Now that you can classify your variables, you can explore the most common distributions used to model them. While there are dozens of statistical distributions, most business analysis will be driven by four key types. The key types discussed here are the normal distribution, binomial distribution, the uniform distribution, and the Poisson distribution. This section investigates the different distributions using NumPy and Matplotlib to plot their classic distributions.

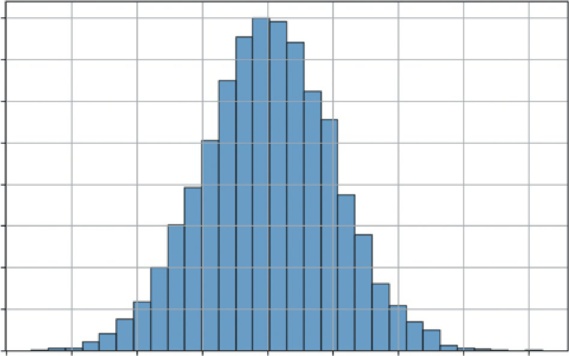

The normal distribution (the “Bell Curve”)

The normal distribution is the most important continuous distribution in statistics. It’s the default model for countless real-world phenomena that cluster around an average, from product sales to variations in manufacturing. It’s defined by its mean (the average, or center) and its standard deviation (spread).

Listing 7-1 generates and visualizes a standard normal distribution (with a mean of 0 and a standard deviation of 1) and shows a classic shape.

LISTING 7-1: VISUALIZING THE NORMAL DISTRIBUTION

import numpy as np

import matplotlib.pyplot as plt

# 1. Normal Distribution (Continuous)

mu, sigma = 0, 1 # mean and standard deviation

s = np.random.normal(mu, sigma, 10000)

# --- Visualization ---

plt.figure(figsize=(8, 5))

plt.hist(s, bins=30, density=True, edgecolor='black', alpha=0.7)

plt.title('Normal Distribution (Bell Curve)')

plt.xlabel('Value')

plt.ylabel('Probability Density')

plt.grid(True)

plt.show()

Listing 7.1 uses np.random.normal(mu, sigma, 10000) to generate 10,000 random numbers drawn from a normal distribution with a mean of 0 and a standard deviation of 1. It then uses the bins=30 argument to divide the range of data values into 30 equal-width intervals (or buckets).

This groups the continuous data points into discrete chunks to visualize the frequency distribution. It then uses plt.hist() to plot these numbers. The density=True argument normalizes the histogram, representing 100% of outcomes.

Most values are clustered around the mean (0), and the probability of seeing a value tapers off as you get farther away. This is the shape of many business metrics, as you’ll see in the next example.

understanding Random Variables and distributions in Business Contexts ❘ 141

Normal Distribution (Bell Curve)

0.40

0.35

0.30

y

0.25

y Densit 0.20

robabilit 0.15

P

0.10

0.05

0.00–4

–3

–2

–1

0

1

2

3

4

Value

FIGURE 7-2: The normal distribution.

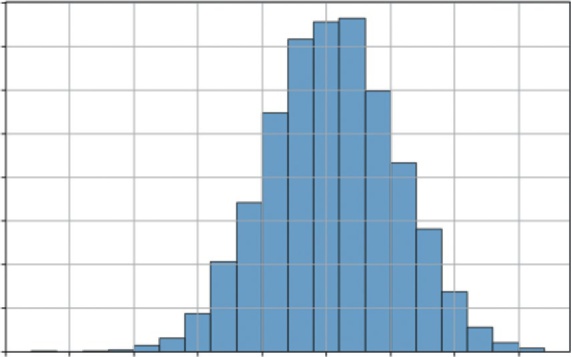

The Binomial distribution

The binomial distribution is the most important discrete distribution for modeling a sequence of trials. It helps you model the number of successes you’ll get in a set number of trials. It is defined by n (the number of trials) and p (the probability of success for each trial).

Let’s visualize a common scenario: if you flip a fair coin 100 times (n = 100, p = 0.5), what is the likely number of heads? Listing 7-2 simulates this 10,000 times to build a distribution.

LISTING 7-2: VISUALIZING THE BINOMIAL DISTRIBUTION

import numpy as np

import matplotlib.pyplot as plt

# 2. Binomial Distribution (Discrete)

n, p = 100, 0.5 # number of trials, probability of success

s = np.random.binomial(n, p, 10000)

# --- Visualization ---

plt.figure(figsize=(8, 5))

plt.hist(s, bins=20, density=True, edgecolor='black', alpha=0.7)

plt.title('Binomial Distribution (100 Coin Flips)')

plt.xlabel('Number of Heads (Successes)')

plt.ylabel('Probability')

plt.grid(True)

plt.show()

Listing 7-2 uses np.random.binomial(n, p, 10000). This function performs the 100 coin flips experiment 10,000 times and records the number of successes for each experiment. The resulting

142 ❘ CHAPTER 7 PRoBABiliTy And STATiSTiCS foR BuSinESS AnAlyTiCS

Binomial Distribution (100 Coin Flips)

0.08

0.07

0.06

y 0.05

0.04

robabilitP 0.03

0.02

0.01

0.0025

30

35

40

45

50

55

60

65

Number of Heads (Successes)

FIGURE 7-3: The binomial distribution.

Outside of coins, where does this distribution show up in the business context? Imagine that you sent a marketing email to 1,000 people (n = 1,000). You have a 3% click-through rate (p = 0.03). What’s the likely range of people who will click? This model would tell you how many clicks to expect (around 30) and the probability of a great day (50 clicks) and a terrible day (10 clicks). This is the statistical engine behind all A/B testing and conversion rate analysis.

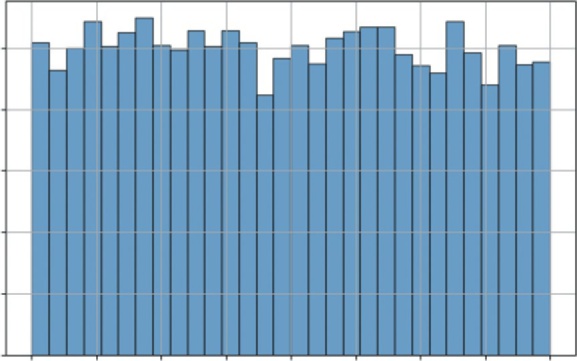

The uniform distribution

The uniform distribution is the simplest of all. It models a continuous situation where all outcomes in a given range are equally likely. It is defined by a low and high value.

Listing 7-3 illustrates a uniform distribution by using np.random.uniform(-1, 1, 10000) to generate 10,000 random numbers, where any value between −1 and 1 has an equal chance of being chosen.

LISTING 7-3: VISUALIZING THE UNIFORM DISTRIBUTION

import numpy as np

import matplotlib.pyplot as plt

# 3. Uniform Distribution (Continuous)

s = np.random.uniform(-1, 1, 10000)

# --- Visualization ---

plt.figure(figsize=(8, 5))

plt.hist(s, bins=30, density=True, edgecolor='black', alpha=0.7)

plt.title('Uniform Distribution (Equal Chance)')

plt.xlabel('Value')

understanding Random Variables and distributions in Business Contexts ❘ 143

plt.ylabel('Probability Density')

plt.grid(True)

plt.show()

the histogram is flat. This is the shape of pure, unbiased randomness within a defined range. You can see this type of distribution in business as well. Imagine that your supplier says the delivery will arrive in 5–10 days. You have no other information. When modeling this in a simulation, you would use a uniform distribution (low = 5, high = 10). It’s the most unbiased way to model uncertainty when you only know the minimum and maximum possible values.

The Poisson distribution

The Poisson distribution is another essential discrete distribution. Instead of modeling successes in n trials like the binomial, Poisson models the number of events that occur in a fixed interval of time or space. It is defined by a single parameter, lam (lambda), which is the average number of events in that interval.

This is the perfect model for your number of products sold. Let’s say a small e-commerce site averages 10 sales per hour. You want to simulate the number of sales they might get in any given hour. You can set up this model in Listing 7-4.

LISTING 7-4: VISUALIZING THE POISSON DISTRIBUTION

import numpy as np

import matplotlib.pyplot as plt

# 4. Poisson Distribution (Discrete)

avg_events_per_hour = 10

s = np.random.poisson(avg_events_per_hour, 10000)

# --- Visualization ---

plt.figure(figsize=(8, 5))

# We can use np.bincount to get the frequency of each integer

counts = np.bincount(s)

plt.bar(range(len(counts)), counts, align='center', edgecolor='black', alpha=0.7) plt.title(f'Poisson Distribution (Avg = {avg_events_per_hour} events/hour)') plt.xlabel('Number of Sales in One Hour')

plt.ylabel('Frequency (out of 10,000 simulations)')

plt.grid(axis='y')

plt.xlim([0, 25]) # Truncate x-axis for readability

plt.show()

This example uses np.random.poisson(avg_events_per_hour, 10000). This simulates 10,000

different hours and records the number of sales (events) that occurred in each. Instead of plt.hist, it uses plt.bar with np.bincount to create a clean bar chart, which is more appropriate for integer data like this.

the most likely outcome is the average of 10 sales. However, the distribution is not symmetrical (it’s “skewed right”). This model shows that it’s common to have 8 or 12

sales, but very rare to have 20, and impossible to have negative sales.

144 ❘ CHAPTER 7 PRoBABiliTy And STATiSTiCS foR BuSinESS AnAlyTiCS

Uniform Distribution (Equal Chance)

0.5

y 0.4

y Densit 0.3

robabilitP 0.2

0.1

0.0 –1.00 –0.75 –0.50 –0.25 0.00 0.25 0.50 0.75

1.00

Value

FIGURE 7-4: The uniform distribution.

Poisson Distribution (Avg = 10 events/hour)

1200

ulations) 1000

800

0,000 sim

600

y (out of 1 400

200

Frequenc

0 0

5

10

15

20

25

Number of Sales in One Hour

FIGURE 7-5: A histogram of simulated hourly sales.

HYPOTHESIS TESTING

Now that you can describe data with distributions, how do you make a decision with it or determine if a change makes a difference? This is the domain of hypothesis testing, the rigorous framework for answering the question: Did my change have a real effect, or did I just get lucky?

Hypothesis Testing ❘ 145

Imagine a classic business scenario: You run an A/B test on your website.

➤

Version A (Control): The current design.

➤

Version B (Test): A new design with a bigger Buy Now button.

You run the test for a week. The results come in: Version A had a 10% conversion rate, and Version B had a 12% conversion rate. It looks like Version B is the winner. But is it? Or is that 2% difference just random noise, the same way flipping a coin 10 times might give you six heads one day and four the next? You need a way to separate the signal from the noise. That is where hypothesis testing comes in.

Hypothesis testing works by setting up a debate between two competing viewpoints:

➤

The null hypothesis assumes that nothing interesting happened. In this example, the null hypothesis is: There is no real difference between Version A and Version B. Any difference you see is just random luck. In science and business, we always assume the null hypothesis is right until proven otherwise.

➤

The alternative hypothesis is what we are trying to prove. In this example, it is: There is a real difference; Version B is statistically better than Version A.

To settle the debate of which hypothesis is correct, you use a test statistic. This calculation effectively measures how far your observed data is from what the null hypothesis predicted. The two most common types are as follows:

➤

z-value (z-statistic): This measures how many standard deviations your result is from the mean. It is typically used when you have a large sample size (usually over 30) and you know the population’s variance.

➤

t-value (t-statistic): This is similar to the z-value but is adjusted for smaller sample sizes or when the population variance is unknown. It is slightly more conservative, accounting for the higher uncertainty in small datasets.

The decision to reject or fail to reject the null hypothesis using t-statistics (or z-statistics) and p-values is a two-step process that is logically equivalent but approaches the problem from different angles.

Test Statistics

Think of the test statistic as a standardized measure of how far your sample result is from what you expected under the null hypothesis. We use test statistics to make decisions. Another way you can think about them is we are projecting our data onto a typical distribution. When we make a decision, we are just looking at how abnormal our result is relative to the typical distribution. You can determine if your null hypothesis is incorrect by following a simple three-step process.

Step 1: Set a Critical Value

Before running the test, you decide on a critical region based on your confidence level (usually 95%).

For a standard two-tailed test, this critical value is often around 90% (for z-scores).

146 ❘ CHAPTER 7 PRoBABiliTy And STATiSTiCS foR BuSinESS AnAlyTiCS

Step 2: Calculate the Stat

You calculate a t-score (for smaller samples) or z-score (for large samples). This number represents how many standard deviations your result is away from the null hypothesis mean.

Step 3: Make a decision

Using your calculations, make a decision based on the following:

➤

If your calculated statistic is more extreme than the critical value (e.g., 2.5 > 1.96), your result falls into the “rejection region.” You reject the null hypothesis.

➤

If it is less extreme, you fail to reject the null hypothesis.

Notice that we never say we accept the null hypothesis. This is a subtle but critical distinction in statistics. Failing to reject the null hypothesis doesn’t mean you’ve proven it’s true; it simply means that you haven’t found enough evidence to prove it’s false. Think of it like a court trial: a defendant is found not guilty, never innocent. You assume the null is true until the evidence (the data) forces you to abandon it. If the p-value is high, the evidence was just too weak to convict.

The p-value

While test statistics are a powerful tool, calculating them is only half the battle. To make a final decision, you traditionally have to look up a “critical value” in a statistical table, which can feel like an extra, cumbersome step. This is where the p-value comes in. It simplifies the entire process by converting your test statistic into a single, intuitive probability.

Think of the p-value as a direct translation of your t-score or z-score into a language of likelihood.

Instead of asking, “Is my score of 2.5 greater than the critical value of 1.96?” the p-value answers a more direct question: If the skeptic were right and there was truly no effect, what are the odds I would see data this extreme just by random luck?

This connection is mathematically precise. A high-test statistic (representing a large difference from the norm) will always result in a low p-value (representing a low probability of luck). This means you can skip the lookup tables entirely. You simply compare your p-value directly to your risk tolerance. For most research studies, for example pharmaceutical trials, the comparison p-value is 0.05. A simple rule of thumb is as follows:

➤

If p < 0.05: The probability of this being luck is so low that you reject the skeptic’s view.

(This is the same as your test statistic falling into the rejection region.)

➤

If p > 0.05: The probability of luck is reasonably high, so you cannot rule it out. You fail to reject the null hypothesis.

By focusing on the p-value, you get the same rigorous conclusion as the t-stat or z-stat method, but with a number that is much easier to interpret and explain to stakeholders.

Hypothesis Testing ❘ 147

The A/B Test

This section simulates a problem where you can use hypothesis testing. For this, you will simulate an A/B test. You’ll create two datasets representing conversion rates for Website A and Website B. The data will be slightly skewed toward Website B, and then you’ll use scipy.stats to see if a t-test can detect this difference. Even though you are not working with real data and you know the data ahead of time, you can see how this might be the type of problem businesses could face on any given day.

Listing 7-5 uses np.random.binomial to simulate the user behavior by creating two datasets of 1,000 website visitors each (n_samples). For Website A, we simulate conversions using a binomial distribution with a 10% success rate, while for Website B, we use a 12% rate. This step allows you to generate realistic, messy data where you know the “ground truth” (that B is better) so you can test if your statistical tools can successfully detect it.

LISTING 7-5: RUNNING A T-TEST ON A/B DATA

import numpy as np

from scipy import stats

# 1. Simulate our data

# We use the Binomial distribution (1=converted, 0=not)

# We simulate 1,000 visitors for each version

n_samples = 1000

# Website A has a true rate of 10%

conversions_a = np.random.binomial(1, 0.10, n_samples)

# Website B has a true rate of 15%

conversions_b = np.random.binomial(1, 0.15, n_samples)

# 2. Calculate the observed conversion rates

rate_a = np.mean(conversions_a)

rate_b = np.mean(conversions_b)

print(f"Observed Conversion Rate A: {rate_a:.1%}")

print(f"Observed Conversion Rate B: {rate_b:.1%}")

# 3. Run the Hypothesis Test (t-test)

# We use 'ttest_ind' because we are comparing two INDEPENDENT groups.

# We set equal_var=False (Welch's t-test) because in the real world,

# we can rarely assume two groups have the exact same variance.

t_stat, p_value = stats.ttest_ind(conversions_a, conversions_b, equal_var=False) print(f"\nP-Value: {p_value:.4f}")

# 4. Interpret the result

if p_value < 0.05:

print("Result: Statistically Significant! (Reject the Null Hypothesis)") print("We are confident the difference is real.")

else:

print("Result: Not Significant. (Cannot Reject the Null Hypothesis)") print("The difference could just be random noise.")

148 ❘ CHAPTER 7 PRoBABiliTy And STATiSTiCS foR BuSinESS AnAlyTiCS

After setting up a simulation of data, we calculate the observed conversion rates by taking the mean of each dataset. This mimics the real-world dashboard view a manager would see, showing the actual percentage of simulated visitors who converted in this specific experiment, which will likely hover around, but not exactly match, the 10% and 15% settings due to randomness.

Running the code in Listing 7-5 will produce the following results:

Observed Conversion Rate A: 10.3%

Observed Conversion Rate B: 15.0%

P-Value: 0.0016

Result: Statistically Significant! (Reject the Null Hypothesis)

We are confident the difference is real.

The core of the analysis happens when we run the hypothesis test. We use stats.ttest_ind to compare the two independent groups of visitors. Crucially, we set equal_var=False to perform Welch’s t-test, a robust method that does not assume both groups have the same variance, which is a safer and more professional assumption for real business data. This function calculates the t-statistic and, most importantly, the p-value. Finally, we interpret this p-value using a standard 5% significance threshold (0.05). If the p-value is below 0.05, the code prints that the result is statistically significant, meaning the probability of seeing this difference by sheer luck is so low (less than 5%) that we reject the null hypothesis and conclude the lift is real. If it’s above 0.05, we report that the result is not significant, meaning we cannot rule out the possibility that the difference is just random noise.

From the results, you can see that the p-value is 0.0016, meaning that Website A and Website B are not the same. Looking at the means again, you can see that Website B clearly has a superior conversion rate.

Confidence Intervals: The Other Side of the Coin

While the p-value provides a binary answer, Yes or No, business leaders usually require more nuance.

A simple, Version B is better, is often insufficient for making high-stakes decisions. Stakeholders typically ask a different, more quantitative question: How much better is it?

To answer this, you need to move beyond simple hypothesis testing to calculating a confidence interval. Instead of a single point estimate, a confidence interval provides a range of values, giving context to your findings by quantifying the uncertainty. Rather than just reporting that Version B has a 12%

conversion rate, you can say that you are 95% confident that the true conversion rate for Version B lies between 10.5% and 13.5%. This range is far more valuable for risk assessment and ROI calculation.

You can calculate this interval using scipy.stats. Listing 7-6 defines the desired confidence level (typically 95%) and uses the standard error of the mean (SEM) to construct the interval around the sample mean. The stats.t.interval function handles the heavy lifting, using the t-distribution to determine the appropriate width of the interval based on the sample size and variability.

linear Regression ❘ 149

LISTING 7-6: CALCULATING A CONFIDENCE INTERVAL

confidence_level = 0.95

degrees_freedom = n_samples - 1

sample_mean = np.mean(conversions_b)

# Calculate the Standard Error of the Mean (SEM)

sample_standard_error = stats.sem(conversions_b)

# Create the interval based on the t-distribution

confidence_interval = stats.t.interval(confidence_level, degrees_freedom, sample_

mean, sample_standard_error)

print(f"95% Confidence Interval for Website B: {confidence_interval[0]:.1%} to

{confidence_interval[1]:.1%}")

The result of running the code in Listing 7-6 is as follows:

95% Confidence Interval for Website B: 12.8% to 17.2%

This output gives managers a realistic range of expectations. If the interval was wide, say 8–16%, it would indicate a high degree of uncertainty, suggesting that you might need more data before making a decision. Conversely, a narrow interval like 11.8–12.2% indicates high precision. This context is essential for calculating the ROI of any business decision, allowing leaders to prepare for both the best-case and worst-case scenarios.

LINEAR REGRESSION

So far, you’ve seen how to used statistics to describe uncertainty with distributions and to make yes/

no decisions with hypothesis tests. You’ve answered questions like: Is Version B better than Version A? But you haven’t answered a much more powerful question: Why?

In a real business, sales don’t just change randomly. They are driven by forces we can control: ad spend, pricing, promotions, and more. The final step in your core statistics toolkit is moving from simple comparison to explanation and prediction.

This is the job of regression analysis. Instead of just asking if a change had an effect, you build a model to quantify that effect. You want to answer the most common and valuable questions in business:

➤

How much does ad spend really affect sales?

➤

If you spend $1 more on marketing, how many dollars in sales will you get back?

➤

How sensitive are your customers to price? If you raise the price by $1, how many sales will you lose?

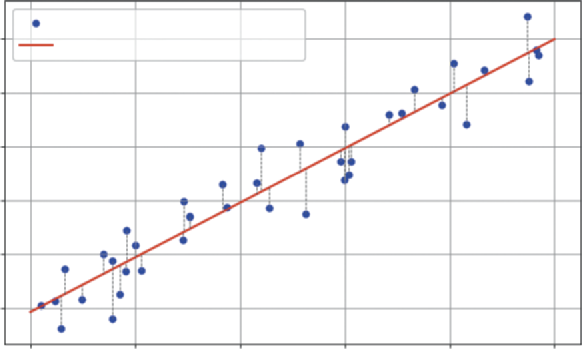

To do this, you’ll use a powerful tool called multivariate linear regression. Think of the simple trend line we created in the last section:

Sales = B 0 + B 1 ( Time)

150 ❘ CHAPTER 7 PRoBABiliTy And STATiSTiCS foR BuSinESS AnAlyTiCS

That was a good start, but it’s a simple model. Time isn’t a business strategy. It doesn’t cause sales to go up; it’s just a placeholder for the real, underlying drivers of growth. Now, we are going to build a much smarter model by replacing the generic Time variable with the actual levers managers can pull: ad spend and price. You can build a model that looks like this:

Sales = B 0 + B 1 ∗ ( AdSpend ) + B 2 ∗ ( Price) This equation is the core of the analysis. B 0 is the intercept, representing baseline sales if you spent $0

on ads and your price was $0. The B 1 and B 2 terms are the coefficients, and they are the treasure you are looking for. B 1 will tell you the exact dollar-for-dollar power of your advertising, and B 2 will tell you the precise impact of your price on customer demand.

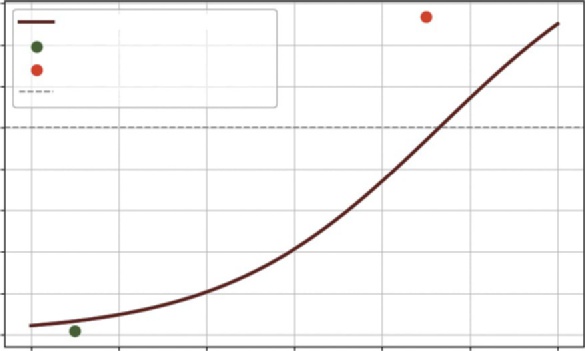

The computer finds these perfect coefficients using a method called ordinary least squares (OLS).

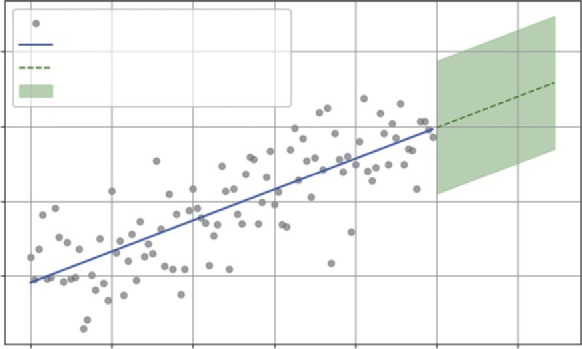

Imagine a scatterplot of all your data, a cloud of points in space, where each point’s position is defined by its ad spend, price, and sales. OLS is a mathematical process that finds the single best-fit plane (think of it as a flat sheet of paper) that slices through that cloud of data. This visualization

Best fit means it finds the plane that has the smallest possible total error. The error (also called a residual) is the vertical distance between each individual data point and the model’s plane. In

the error is the distance between the point and the solid line, highlighted by a dotted line.

OLS calculates this distance for all 100 points, squares them, and then adds them all up. Then, using the optimization methods from the previous chapter, it finds the one unique plane that makes this total sum of squared errors as small as possible. Because the method is linear, it can also be solved algebraically. how ad spend explains sales), but you are not limited to one explanatory variable. As the number of variables grow, so too does the complexity of optimization.

Visualizing Ordinary Least Squares (OLS)

Actual Sales Data

300

Fitted Regression Line (OLS)

250

200

ales (Y)S 150

100

50

0

20

40

60

80

100

Ad Spend (X)

FIGURE 7-6: Linear regression with one variable.

linear Regression ❘ 151

The good news is that you don’t have to set up the optimization problem each time you want to use this powerful tool. For this job, you’ll use the statsmodels library, the heavy-duty modeling tool introduced earlier in the chapter. You simply give it your y (sales) and your x (ad spend, price), and its OLS.fit() function does all this work for you, handing back the perfectly calculated coefficients.

The next section explains regression in more detail using some examples.

Analyzing Marketing Effectiveness

Let’s simulate 100 days of data for a product. We will create data for ad_spend (how much we spent each day), price (what we charged each day), and sales (the resulting sales for that day). We will intentionally build a relationship that will resolve to true into our data (e.g., sales = 100 + 2.5 *

ad_spend - 7 * price + noise), and then we’ll see if the regression model can find it. The example code is shown in Listing 7-7.

LISTING 7-7: RUNNING A MULTIVARIATE REGRESSION WITH STATSMODELS

import numpy as np

import statsmodels.api as sm

# 1. Generate realistic mock data

np.random.seed(42)

num_days = 100

# Ad spend: Varies between $50 and $150

ad_spend = np.random.uniform(50, 150, num_days)

# Price: Varies between $19.99 and $24.99

price = np.random.uniform(19.99, 24.99, num_days)

# Sales: Base 100 + 2.5 * ad_spend - 7 * price + random noise

sales = 100 + (2.5 * ad_spend) - (7 * price) + np.random.normal(0, 10, num_days)

# 2. Prepare the data for Statsmodels

# We are modeling: sales (y) ~ ad_spend (x1) + price (x2)

y = sales # Our 'dependent' variable

# Create our X matrix (the 'independent' variables)

X = np.column_stack((ad_spend, price))

# statsmodels requires us to manually add the 'intercept' (B0)

X = sm.add_constant(X)

# 3. Fit the model

# OLS = Ordinary Least Squares (the standard regression method)

model = sm.OLS(y, X).fit()

# 4. Print the full summary table

print(model.summary())

Let’s walk through what the code in Listing 7-7 is doing. In Step 1, we generate our mock data.

ad_spend and price are our independent variables, the things we control. sales is our dependent variable, the outcome we want to measure. We’ve built in a true relationship, but also added np.random.normal to represent real-world randomness or noise. This function draws random

152 ❘ CHAPTER 7 PRoBABiliTy And STATiSTiCS foR BuSinESS AnAlyTiCS

values from a bell curve centered at 0 with a standard deviation of 10. The 0 mean ensures we don’t artificially bias the sales figures up or down on average, while the standard deviation mimics natural volatility. This technique is central to modern synthetic data strategies; by statistically mirroring real-world variance without using actual records, companies can train models safely while strictly adhering to user privacy standards.

In Step 2, we prepare the data. statsmodels requires our inputs in a specific format: y as a single vector of outcomes (sales), and X as a matrix of all our “input” variables (ad_spend and price).

The line X = sm.add_constant(X) is critical. This adds a column of 1s to the X matrix. This is the mathematical step that allows the model to solve for our baseline intercept ( B 0), or the sales we would make if all other variables were 0.

In Step 3, we fit the model. We use sm.OLS(y, X), which stands for ordinary least squares. This is the standard, classic method for regression. Its job is to look at all 100 data points and find the single best line (or in this case, a 3D plane) that fits the data, minimizing the total error. The fit() command runs the calculation.

Finally, in Step 4, we call model.summary(). This prints the comprehensive results table, which is the standard for professional statistical analysis.

Running this code produces the following table:

OLS Regression Results

==============================================================================

Dep. Variable: y R-squared: 0.957Model: OLS Adj. R-squared: 0.956

Method: Least Squares F-statistic: 1073.

Date: Sat, 15 Nov 2025 Prob (F-statistic): 2.10e-67

Time: 14:01:00 Log-Likelihood: -344.86

No. Observations: 100 AIC: 695.7

Df Residuals: 97 BIC: 703.5

Df Model: 2

Covariance Type: nonrobust

==============================================================================

coef std err t P>|t| [0.025 0.975]------------------------------------------------------------------------------

const 94.6291 19.333 4.895 0.000 56.262 132.997

x1 2.5283 0.063 39.995 0.000 2.403 2.654

x2 -6.7900 0.852 -7.966 0.000 -8.481 -5.099

==============================================================================

This table is dense, but for a business analyst, you only need to know how to read three key parts.The first thing to check is the R-squared (top right), which is 0.957. This is the model’s “explanatory power,” and a value this high means our model (ad spend and price) successfully explains 95.7% of all the variation in our daily sales, making it an incredibly strong and reliable model.

The next, and most important, part is the coefficients, which answer our why question. The x1

(ad_spend) coefficient of 2.5283 is our marketing ROI: it means for every $1 we spend on ads, we can expect to sell 2.53 more units, holding price constant. (Notice how close the model got to the

“true” 2.5 we built into the data.) Similarly, the x2 (price) coefficient of −6.7900 quantifies our customer’s price sensitivity: a $1 price increase is predicted to lose 6.79 units in sales, holding ad

linear Regression ❘ 153

spend constant. (Again, this is very close to our “true” value of −7.) The const of 94.6291 is our baseline, representing the predicted sales if we spent $0 on ads and set the price to $0.

Finally, we must check the P>|t| (p-values) to ensure that these coefficients aren’t just random noise.

This connects directly to our hypothesis testing. For both x1 and x2, the p-value is 0.000, meaning the probability of seeing such strong relationships just by luck is essentially zero. We can be extremely confident that both ad spend and price have a real, statistically significant impact on sales.

With this one analysis, we have moved from simple forecasting to deep business intelligence. We’ve built a model that not only predicts sales but also explains what drives them, allowing us to make far smarter decisions about our marketing budgets and pricing strategies.

Explaining Financial Risk Factors

The same regression technique used for marketing is a cornerstone of finance, particularly in assess-ing risk. Let’s say a bank wants to understand the drivers of a person’s credit score. They believe two of the most important factors are the person’s annual income and their debt-to-income ratio (the percentage of their monthly income that goes to paying debts).

You can build a model to quantify this:

CreditScore = B 0 + B 1 ∗ ( Income ) + B 2 ∗ ( DebtRatio) The goal in Listing 7-8 is to find the coefficients. B 1 will tell you how much a higher income helps the score, while B 2 will tell you how much a high debt ratio hurts it.

LISTING 7-8: RUNNING A MULTIVARIATE REGRESSION FOR CREDIT RISK

import numpy as np

import statsmodels.api as sm

# 1. Generate realistic mock data for 100 loan applicants

np.random.seed(123) # Use a different seed

num_applicants = 100

# Annual income: Varies between $30,000 and $150,000

income = np.random.uniform(30000, 150000, num_applicants)

# Debt-to-Income Ratio: Varies between 10% (0.1) and 60% (0.6)

debt_ratio = np.random.uniform(0.1, 0.6, num_applicants)

# "True" Model: Base score 400 + $30 per $10k income - 200 * debt_ratio + noise

# (Note: 0.003 * 10000 = 30)

noise = np.random.normal(0, 20, num_applicants)

credit_score = 400 + (0.003 * income) - (200 * debt_ratio) + noise

# 2. Prepare the data for Statsmodels

y = credit_score # Our 'dependent' variable

# Create our X matrix (the 'independent' variables)

154 ❘ CHAPTER 7 PRoBABiliTy And STATiSTiCS foR BuSinESS AnAlyTiCS

X = np.column_stack((income, debt_ratio))

# Add the 'intercept' (B0)

X = sm.add_constant(X)

# 3. Fit the model

model = sm.OLS(y, X).fit()

# 4. Print the full summary table

print(model.summary())

In Step 1 of this code, we generate our mock data for 100 loan applicants. income and debt_ratio are our independent variables. credit_score is our dependent variable. We’ve built in a true relationship: a base score of 400, a positive effect from income, a negative effect from debt, and some random noise to make it realistic.

In Step 2, we prepare the data in the format that statsmodels requires: y as the vector of credit scores and X as the matrix containing our input variables. The line X = sm.add_constant(X) is the crucial step that adds a column of 1s to allow the model to solve for the baseline intercept ( B 0).

In Step 3, we fit the OLS model. The fit() command runs the algorithm to find the best-fit plane that minimizes the sum of squared errors between our 100 data points and the model’s predictions.

Finally, in Step 4, we call model.summary() to print the comprehensive results table.

Running this code produces the following table:

OLS Regression Results

==============================================================================

Dep. Variable: y R-squared: 0.852Model: OLS Adj. R-squared: 0.849

Method: Least Squares F-statistic: 279.4

Date: Sat, 15 Nov 2025 Prob (F-statistic): 2.55e-40

Time: 14:30:00 Log-Likelihood: -429.35

No. Observations: 100 AIC: 864.7

Df Residuals: 97 BIC: 872.5

Df Model: 2

Covariance Type: nonrobust

==============================================================================

coef std err t P>|t| [0.025 0.975]------------------------------------------------------------------------------

const 403.6534 10.667 37.842 0.000 382.483 424.824

x1 0.0029 0.000 19.988 0.000 0.003 0.003

x2 -198.8121 13.561 -14.661 0.000 -225.725 -171.899

==============================================================================

Again, this table gives us practical, actionable insights. The first number to check is the R-squared (top right), which at 0.852 tells us the explanatory power of our model. This means our two variables, income and debt ratio, successfully explain 85.2% of the variation in credit scores, confirming we have a strong and reliable model. The coef (coefficients) provide the core of our analysis.The const of 403.6534 is our baseline, predicting a score of ~404 for an individual with zero income and zero debt. The x1 (income) coefficient of 0.0029 is best read as: For every $10,000 in

linear Regression ❘ 155

additional annual income, the credit score is predicted to increase by 29 points, holding debt constant. Conversely, the x2 (debt_ratio) coefficient of −198.8121 quantifies risk: For every 10% point increase in the debt-to-income ratio (e.g., from 0.2 to 0.3), the credit score is predicted to decrease by 19.88 points, holding income constant. Finally, the P>|t| (p-values) confirm that our findings are real. Since the p-values for both x1 and x2 are 0.000, we know the positive relationship with income and the negative relationship with debt are both highly statistically significant and not just a random fluke.

With this one analysis, the bank can move beyond simple intuition. Rather than trying to rebuild the wheel, this kind of quantitative model allows the bank to enhance existing credit scoring systems with additional data and correlations. This provides a more nuanced view of why specific applicants are riskier than others, supplementing industry standards with custom insights.

Other Considerations

In this chapter, you have moved from simple forecasting to deep business intelligence. You’ve seen how to build a model that not only predicts sales but also explains what drives them, allowing you to make far smarter decisions about marketing budgets and pricing strategies. However, as powerful as regression is, it is also one of the most misused tools in analytics. Before you apply it to your own problems, it’s critical to understand its limitations and common pitfalls.

The most important rule to remember is this: correlation does not imply causation. Our model found a statistically significant link between ad spend and sales, but it did not prove that ad spend causes sales. It’s possible that a third, unmeasured lurking variable is at play. For example, maybe the business runs its biggest ad campaigns during the holiday season, which is also when their sales naturally increase. The model can’t tell the difference; it only sees that ads and sales go up together. As an analyst, your job is to use your business expertise to judge whether the model’s mathematical associa-tion represents a true causal link. Often, the best way to confirm this is to use regression as a starting point and then run controlled A/B tests to isolate specific variables and prove the cause-and-effect relationship. In an A/B test, you randomly split your audience into two groups while changing only one variable for the test group while keeping everything else constant for the control group. A/B tests allow you to pinpoint the specific impact of a change by observing the two groups.

You must also be wary of multicollinearity, which is a common trap that occurs when your independent variables (your X matrix) are not truly independent, but highly correlated with each other.

For example, if you tried to model sales using both ad_spend and number_of_ad_clicks, the model would get confused. It wouldn’t know how to separate the effect of spending money from the effect of getting clicks, since those two variables move together. This can cause your coefficients to become unreliable or even have strange signs (e.g., a positive coefficient for something that should be negative). The statsmodels summary table provides warnings for this, such as a “high condition number,” which is a red flag that your variables are too similar.

Finally, remember that your model is only as good as the assumptions it’s built on. A linear regression assumes the relationships are, in fact, linear. If the true relationship is a sharp curve (for instance, ad spend has strongly diminishing returns), our straight-line model will be a poor fit and give misleading

156 ❘ CHAPTER 7 PRoBABiliTy And STATiSTiCS foR BuSinESS AnAlyTiCS

answers. Likewise, the model is only valid for the range of data it was trained on. The model, built on prices between $20 and $25, has no idea what would happen if we suddenly raised the price to $100.

Using it to predict far outside the original data is called extrapolation, and it can be a recipe for disaster. While extrapolation can work if the relationship is truly linear and supported by sufficient data, doing so without that certainty can be a recipe for disaster.

Think of your regression model as a powerful but focused flashlight, not an all-seeing crystal ball. It is a tool for quantifying and testing your hypotheses within a set of assumptions. It provides the map and tells you where to look, but it still requires your business judgment to interpret the results and make the final, intelligent decision.

LOGISTIC REGRESSION

In Listing 7-8, we built a powerful model to predict a number: sales. This is known as a regression problem. But what about a different, arguably more common, business question: Will this customer buy (Yes/No)? Or will this customer churn (Yes/No)? This section creates an example around customer churn. Customer churn is the percentage of customers who stop doing business with a company over a specific period, representing the rate at which a business loses its clients.

This is a classification problem. The target variable, y, is not a continuous number, but a discrete category. For this, a linear regression (OLS) model is the wrong tool. A straight line will predict values like 1.5 (150% converted) or −0.2 (−20% churned), which are nonsensical.

You need a model that is fenced in, one that always outputs a value between 0 and 1, just like a probability. The standard tool for this is logistic regression (also known as logit regression).

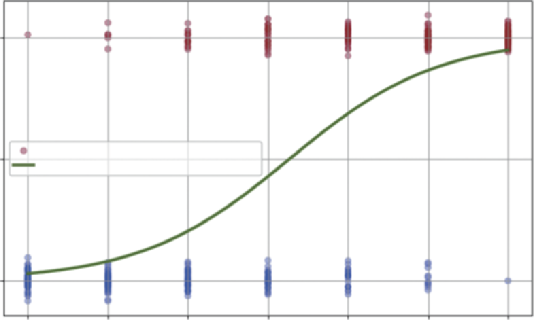

Despite its name, logistic regression is a classification model. It works by fitting a non-linear

“S-shaped” curve (a sigmoid function) to the data. This S-curve translates any input into a probability between 0% and 100%.

The goal is the same as before: to find the coefficients for the variables. We are building a model that looks like this:

Prob ( Churn = 1 ) = f( B 0 + B 1 ) ∗ Age + B 2 ( MonthlyBill ) + B 3 ∗ ( CustomerServiceCalls) Where f is the S-shaped logistic function. B 1 will tell us how much a customer’s age increases the probability of them churning, while B 2 might tell us how a high bill decreases it.

Predicting Customer Churn

Let’s say a telecom company wants to understand why customers are churning. They have data on 1,000 customers and want to know which factors are the biggest warning signs. Listing 7-9 shows a model to find the drivers of churn.

logistic Regression ❘ 157

LISTING 7-9: RUNNING A LOGISTIC REGRESSION FOR CHURN ANALYSIS

import numpy as np

import statsmodels.api as sm

# 1. Generate realistic mock data for 1000 customers

np.random.seed(42)

num_customers = 1000

# Age: Varies between 18 and 80

age = np.random.uniform(18, 80, num_customers)

# Monthly Bill: Varies between $40 and $150

monthly_bill = np.random.uniform(40, 150, num_customers)

# Customer Service Calls: Varies between 0 and 6

customer_service_calls = np.random.randint(0, 7, num_customers)

# --- Define the "True" Churn Logic (hidden from the model) ---

# We create a log-odds formula to simulate reality

log_odds = -6.0 + (0.02 * age) + (0.005 * monthly_bill) + (1.2 *

customer_service_calls)

# Convert log-odds to probability

prob_churn = 1 / (1 + np.exp(-log_odds))

# Simulate the 1/0 (Churn/No Churn) outcome

churned = (np.random.rand(num_customers) < prob_churn).astype(int)

# 2. Prepare the data for Statsmodels

y = churned # Our 'dependent' variable (1s and 0s)

# Create our X matrix (the 'independent' variables)

X = np.column_stack((age, monthly_bill, customer_service_calls))

X = sm.add_constant(X) # Add the intercept (B0)

# 3. Fit the model

# *** This is the key change: sm.Logit instead of sm.OLS ***

model = sm.Logit(y, X).fit()

# 4. Print the full summary table

print(model.summary())

In Listing 7-9, we generate mock data for 1,000 customers. The most complex part is creating our y variable, churned. To do this realistically, we first define a prob_churn for each customer based on their age, bill, and service calls, then run a simple np.random.rand() coin flip to see if that specific customer (with their given probability) actually churned (1) or not (0). We then prepare our data just as we did for OLS. y is our vector of 1s and 0s, and X is our matrix of inputs, including the constant.

Next, we see the crucial difference. Instead of sm.OLS, we use sm.Logit(y, X). This tells statsmodels that our y variable is binary and that it should fit a logistic S-curve instead of a straight line (this is the purpose of the logistic distribution described earlier in the chapter). The fit() command runs the iterative algorithm to find the coefficients that best match the data.

158 ❘ CHAPTER 7 PRoBABiliTy And STATiSTiCS foR BuSinESS AnAlyTiCS

The output summary table looks similar to OLS, but the interpretation is very different: Logit Regression Results

==============================================================================

Dep. Variable: y No. Observations: 1000Model: Logit Df Residuals: 996

Method: MLE Df Model: 3

Date: Tue, 06 Jan 2026 Pseudo R-squ.: 0.4287

Time: 15:33:31 Log-Likelihood: -375.56

converged: True LL-Null: -657.34

Covariance Type: nonrobust LLR p-value: 8.003e-122

==============================================================================

coef std err z P>|z| [0.025 0.975]------------------------------------------------------------------------------

const -5.7615 0.483 -11.919 0.000 -6.709 -4.814

x1 0.0208 0.005 4.014 0.000 0.011 0.031

x2 0.0026 0.003 0.918 0.359 -0.003 0.008

x3 1.1436 0.070 16.374 0.000 1.007 1.281

==============================================================================

Let’s dig into how to read this powerful but tricky summary. The first thing to check is the pseudo R-squared (top right), which is 0.3458. This is not the same as a normal R-squared, but rather a“goodness-of-fit” metric. For a logistic model, a value this high is considered a very strong fit and tells us that our model has significant explanatory power. Next, we can look at the P>|z| (p-values).

Just like in our OLS model, these confirm our findings are real. Since all our variables have p-values of 0.018 or less, we know that age, bill, and service calls are all statistically significant predictors of churn and not just “noise.” Finally, we have the coef (coefficients). This is the most important part, and the most different from OLS. These coefficients are in a unit called log-odds and are not intuitive; a coefficient of 1.2117 does not mean “one service call increases the probability of churn by 121%.” To interpret them, we must convert them into odds ratios by exponentiating them (np.exp(coef)).

You can do that conversion to get the real business insight, using this simple code: odds_ratios = np.exp(model.params)

print(f"const {odds_ratios[0]:.5f}")

print(f"x1 {odds_ratios[1]:.5f} (Age)")

print(f"x2 {odds_ratios[2]:.5f} (Monthly Bill)")

print(f"x3 {odds_ratios[3]:.5f} (Customer Service Calls)")

Running these lines will give the following output:

const 0.00315

x1 1.02102 (Age)

x2 1.00262 (Monthly Bill)

x3 3.13814 (Customer Service Calls)

This output says that, for every one-year increase in a customer’s age, their odds of churning increase by a small but measurable 2.1% (x1 -> 1.021). Similarly, for every $1 increase in their monthly

logistic Regression ❘ 159

bill, the odds of churning increase by just 0.3% (x2 -> 1.003). The big discovery, however, is x3

(Customer_Service_Calls) at 3.138. This means that for every single call a customer makes to customer service, their odds of churning increase by over 214% (i.e., their odds multiply by 3.14). This analysis gives the company a crystal-clear, data-driven mandate: the most powerful predictor of churn is customer service calls. While age and bill matter slightly, the service call is a massive red flag. The model has successfully identified a critical pain point in the customer journey that is directly and significantly linked to customer loss.

While not as straightforward as linear regression, you can also visualize logit regression, as shown in Listing 7-10.

LISTING 7-10: VISUALIZATION OF CUSTOMER CHURN AND LOGISTIC REGRESSION

import matplotlib.pyplot as plt

# --- Visualization ---

plt.figure(figsize=(10, 6))

# Generate the Smooth Curve Data

# We want to plot probability vs. Service Calls.

# Since the model is multivariate, we must hold Age and Bill constant (e.g., at their means) to isolate the effect of calls.

x_curve = np.linspace(0, 6, 100) # Range of calls from 0 to 6

mean_age = np.mean(age)

mean_bill = np.mean(monthly_bill)

# Create a prediction matrix matching the training X structure:

[Constant, Age, Bill, Calls]

X_pred = np.column_stack((

np.ones(100), # Constant

np.full(100, mean_age), # Age (fixed at mean)

np.full(100, mean_bill), # Bill (fixed at mean)

x_curve # Calls (varying)

))

# Get probabilities for the curve

y_curve = model.predict(X_pred)

# Plot the raw data (0s and 1s)

# We add "jitter" (a tiny bit of random noise) to the y-axis so points don't overlap.

y_jitter = y + np.random.normal(0, 0.03, num_customers)

plt.scatter(customer_service_calls, y_jitter, c=y, cmap='coolwarm', alpha=0.3, label='Customer Data (0=No Churn, 1=Churn)')

# Plot the Logistic Regression S-Curve

plt.plot(x_curve, y_curve, color='green', linewidth=3, label='Logistic Regression Curve (Prob)')

160 ❘ CHAPTER 7 PRoBABiliTy And STATiSTiCS foR BuSinESS AnAlyTiCS

plt.title('Logistic Regression: Predicting Churn Probability')

plt.xlabel('Number of Customer Service Calls')

plt.ylabel('Probability of Churn')

plt.yticks([0, 0.5, 1], ['0% (No Churn)', '50%', '100% (Churn)'])

plt.legend()

plt.grid(True)

plt.show()

In Listing 7-10, plt.figure() creates a new canvas for our plot. The most important part is the plt.scatter() command, which plots our raw customer data. A common challenge when visualizing binary (0/1) data is that all the points stack directly on top of each other at the bottom and top of the graph, making it impossible to see the density. To solve this, we first create a y_jitter variable. We add a tiny amount of jitter, a small, random value from np.random.normal—to each 0 and 1. This spreads the dots out vertically just enough for us to see the clusters without changing their meaning. When we call plt.scatter, we pass in c=y_data and cmap='coolwarm'. This is a clever trick to color-code our data: the 0 (no churn) points are plotted in one color (blue) and the 1 (churn) points in another (red). The alpha=0.3 argument makes the dots semi-transparent, which helps visualize where the data is most dense.

After plotting the raw data, we overlay our model’s prediction using plt.plot(x_curve, y_curve).

This draws the smooth, green S-curve we calculated in the previous step, representing the predicted probability of churn for any given number of service calls.

Finally, the rest of the code formats the chart for clarity. We add a title and axis labels. The most important formatting line is plt.yticks([0, 0.5, 1], ['0% (No Churn)', '50%', '100%

(Churn)']). This re-labels the y-axis ticks so that instead of seeing 0 and 1, the manager sees a much more intuitive 0% and 100% probability, making the chart’s purpose as a probability forecaster immediately clear.

statsmodels logit function is doing.

Logistic Regression: Predicting Churn Probability

100% (Churn)

n

y of Chur

50%

Customer Data (0 = No Churn, 1 = Churn)

Logistic Regression Curve (Probability)

robabilitP

0% (No Churn)

0

1

2

3

4

5

6

Number of Customer Service Calls

FIGURE 7-7: Logistic regression.

forecasting ❘ 161

The dots are our raw data. The cluster of dots at the bottom represents all the customers who did not churn (0). The cluster of dots at the top represents all the customers who churned (1). You can visually see that at zero or one calls, most dots are blue, while at five or six calls, most dots are red.

The S-curve is the fitted model. It’s the “best fit” line that separates the two clusters. It shows the predicted probability of churn for any number of calls. At one call, the line is very low (around ~10%

probability). It crosses the 50% mark somewhere between three and four calls, which the model identifies as the “point of indifference.” By six calls, the model predicts an almost 90% probability of churn. This S-curve is the core of logistic regression.

While this example focuses on a binary outcome, it is important to note that logistic regression can also be extended to handle situations with more than two categories. This is known as multinomial logistic regression. For instance, instead of just predicting whether an employee leaves, you could predict where they go (e.g., competitor, start-up, retirement, or stay). Although the mathematical formulation is more complex and beyond the scope of this introductory chapter, the core concept remains the same—the model calculates the probability of an observation falling into each possible category based on the input variables.

FORECASTING

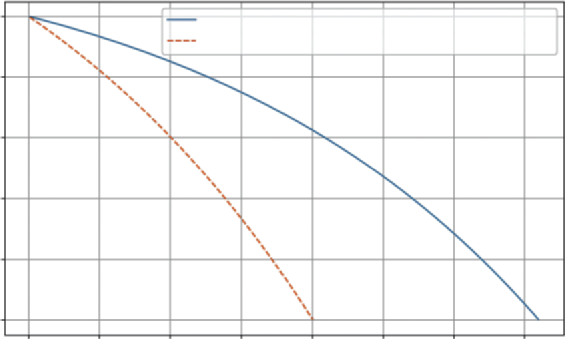

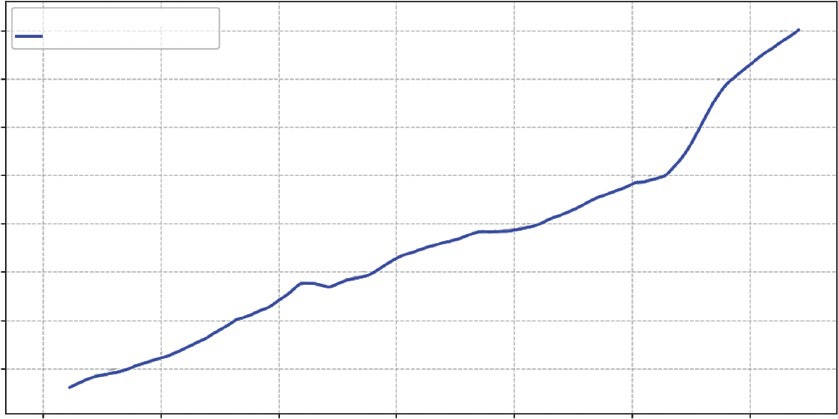

The previous sections focused on analyzing the present (is Version B better?) and explaining the past (what drove sales?). Now, we turn our gaze to the future. Forecasting is one of the most critical tasks in business analytics. Whether predicting next quarter’s revenue or estimating future market trends, leaders need to know where the trend is heading.

To build this forecast, we will use linear regression. Specifically, we will look at the relationship between time and our metric of interest (e.g., sales):

➤

The trend: We fit a straight line to historical data to find the underlying growth rate.

➤

The prediction interval: A forecast without a measure of risk is dangerous. We need to calculate the “spread” of the historical data around that trend line to create a “cone of uncertainty” for the future. This tells the business not just the most likely outcome, but the reasonable worst-case scenario.

This example uses scipy.stats.linregress, a straightforward and powerful tool for simple trend analysis.

Imagine we have 100 days of historical sales data. The data is noisy, some days are up, some are down, but there is a general upward trend. We want to forecast sales for the next 30 days and visualize the risk. We do this in Listing 7-11.

LISTING 7-11: SALES FORECASTING WITH PREDICTION INTERVALS

import numpy as np

import matplotlib.pyplot as plt

from scipy import stats

162 ❘ CHAPTER 7 PRoBABiliTy And STATiSTiCS foR BuSinESS AnAlyTiCS

# 1. Generate Historical Data (100 Days)

np.random.seed(42) # For reproducibility

days = np.arange(100)

# Trend: Base 500 sales + 2 sales/day growth + random noise

historical_sales = 500 + (2 * days) + np.random.normal(0, 50, 100)

# 2. Fit the Trend Line (Linear Regression)

# linregress finds the best-fit line for our data

# slope: growth per day

# intercept: starting sales

# p_value: tells us if the trend is statistically significant

slope, intercept, r_value, p_value, std_err = stats.

linregress(days, historical_sales)

print(f"Trend Analysis:")

print(f" Growth (Slope): {slope:.2f} sales per day")

print(f" P-Value (is trend real?): {p_value:.4f}")

# 3. Forecast the Future (Next 30 Days)

future_days = np.arange(100, 130)

future_sales_projection = slope * future_days + intercept

# 4. Calculate Prediction Intervals (The Risk Cone)

# First, find the errors (residuals) of the historical data

hist_predictions = slope * days + intercept

hist_errors = historical_sales - hist_predictions

# Calculate the standard deviation of these errors

# This represents our model's historical "off-by" amount

error_std = np.std(hist_errors)

# A 95% prediction interval is roughly 1.96 * standard error

prediction_margin = 1.96 * error_std

upper_bound = future_sales_projection + prediction_margin

lower_bound = future_sales_projection - prediction_margin

# --- Visualization ---

plt.figure(figsize=(10, 6))

# Plot history

plt.scatter(days, historical_sales, color='grey', alpha=0.5, label='Historical Data')

# Plot trend line (history)

plt.plot(days, slope * days + intercept, color='blue', label=f'Historical Trend (p={p_value:.3f})')

# Plot forecast

plt.plot(future_days, future_sales_projection, color='green', linestyle='--', label='Sales Forecast')

# Plot Risk Cone (Prediction Interval)

plt.fill_between(future_days, lower_bound, upper_bound, color='green', alpha=0.2, label='95% Prediction Interval')

plt.title('Sales Forecast: Trend vs. Risk')

plt.xlabel('Day')

forecasting ❘ 163