plt.title('Handling Missing Data with Time-Based Interpolation')

plt.xlabel('Date')

plt.ylabel('Sales')

plt.legend()

plt.grid(True, linestyle='--', alpha=0.5)

plt.show()

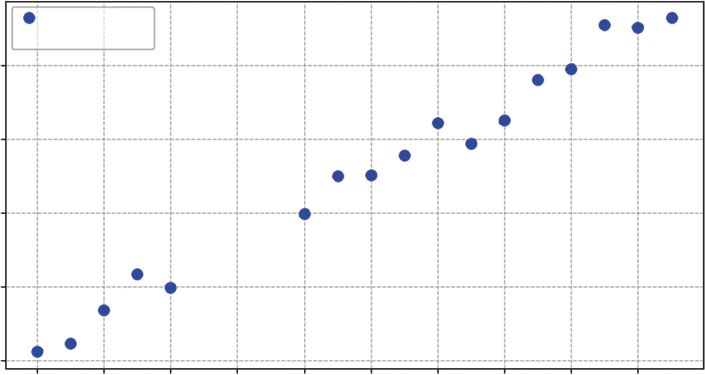

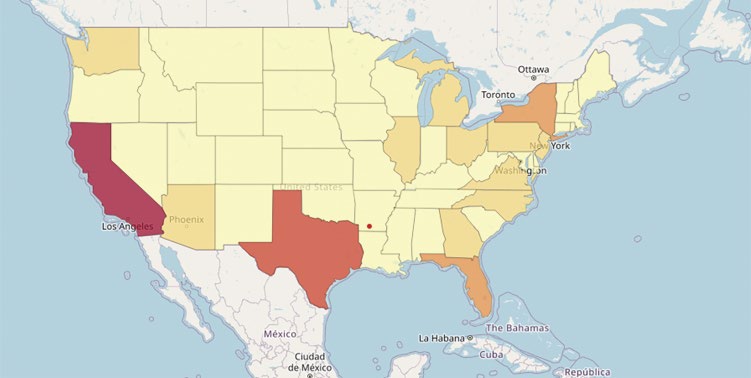

Listing 9-6 uses the .interpolate() function. Specifically, we use method='time'. This is critical for time-series data. It looks at the index (the dates) and draws a line between the valid points surrounding the gap. If the gap is three days long, it calculates the slope needed to get from the start to the end of that three-day period and fills in the missing days accordingly. This preserves the overall trend of the data without introducing artificial dips (like filling with 0) or plateaus (like filling with the mean). The result now is no missing data points. You can visualize what is happening in

The biggest risk with interpolation is hallucinating data. If you have a gap of three months in your sales data, drawing a straight line across it creates 90 days of fake data that implies a smooth, steady trend that likely never existed. As a rule of thumb, interpolate short gaps (to fix glitches) but treat long gaps as separate periods of analysis.

Aggregating and Resampling

Often, the raw data you collect is too granular for strategic decision-making. You might have data recorded every second or every hour, but your stakeholders care about the monthly total. This is where resampling becomes essential. It allows you to change the frequency of your data—for example, converting daily sales into monthly revenue—and define exactly how to aggregate those values, whether by summing them, taking the average, or finding the maximum.

Visualizing Change Over Time (Time-series) ❘ 199

Handling Missing Data with Time-based Interpolation

Observed Data

Interpolated Fill

180

160

alesS 140

120

100

1-01

1-03

1-05

1-07

1-09

1-11

1-13

1-15

1-17

1-19

2024-0

2024-0

2024-0

2024-0

2024-0

2024-0

2024-0

2024-0

2024-0

2024-0

Date

FIGURE 9-2: Handling missing data with time-based interpolation.

This is distinct from the rolling averages used earlier. A rolling average smooths the noise while keeping the original frequency (e.g., daily), whereas resampling fundamentally changes the shape of the data, condensing it into a new timeframe that aligns with your business reporting cycles. In Listing 9-7, daily data is resampled into monthly totals.

LISTING 9-7: RESAMPLING DAILY DATA TO MONTHLY TOTALS

# Make sure to run the previous listings before running this code

# Resample 'D' (Daily) data to 'ME' (Monthly) and calculate the Sum

monthly_sales = clean_data.resample('ME').sum()

print("\n--- Monthly Aggregates ---")

print(monthly_sales.head())

Listing 9-7 uses the .resample('ME') method. This tells Pandas to group our daily data into bins of months. This setting can be set to your data, for example, W for week. We then chain .sum() to tell it how to handle the data in those bins: add it all up. This transforms our 20 rows of daily data into a single row representing the total sales for January. The result of running this code is as follows:

--- Monthly Aggregates ---

Sales

2024-01-31 2967.030383

200 ❘ CHAPTER 9 ILLusTRATIng TImE-sERIEs AnD LInEAR DATA However, .sum() is just one of many aggregation methods available. Depending on your business question, you might choose differently:

➤

.mean(): Useful for finding the average daily performance over a month (e.g., What was our average daily active user count in March?).

➤

.max() or .min(): Essential for capacity planning (e.g., What was the peak server load last week? or What was the lowest inventory level this quarter?).

➤

.last(): Critical for financial data like stock prices or account balances, where you care about the value at the end of the period, not the sum or average.

Choosing the right aggregation function is as important as choosing the right timeframe; summing stock prices would be nonsensical, while averaging total revenue would be misleading.

Boxplots and Histograms

While a line chart shows you when things happened, it can obscure what actually happened in terms of variability. To understand the behavior of your metric, you need to ignore time for a moment and look at the distribution. This answers critical questions such as: Is our server load usually stable at around 50%, or does it swing wildly between 10% and 90%?

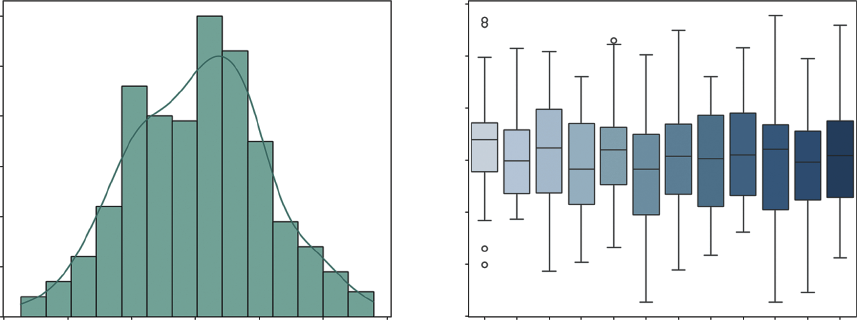

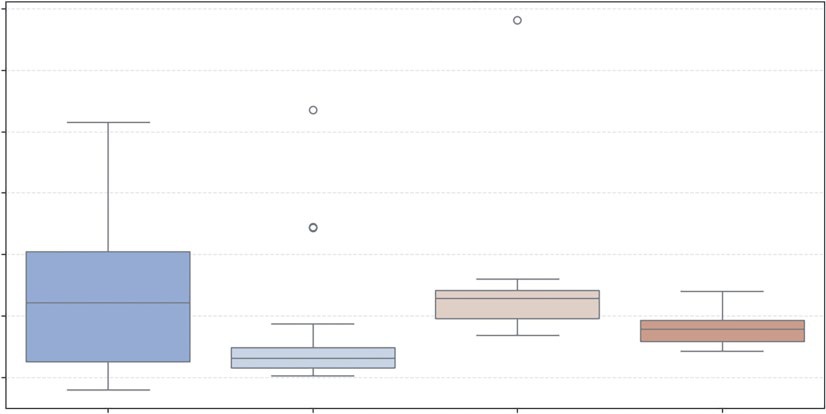

Two visualizations are particularly powerful here. The histogram (often combined with a density plot) shows the overall shape of your data, whether it follows a normal bell curve or is skewed by extreme values. The boxplot is excellent for identifying outliers and visualizing the spread of data across different categories, such as comparing the volatility of sales in January versus July. Listing 9-8 creates this visualization.

LISTING 9-8: VISUALIZING TIME-SERIES DISTRIBUTIONS

import seaborn as sns

# Generate more data for a better distribution visual

np.random.seed(101)

long_dates = pd.date_range(start='2023-01-01', periods=365, freq='D')

# Data with a trend and some seasonality

daily_volatility = np.random.normal(0, 10, 365)

long_ts = pd.DataFrame({'Value': 100 + daily_volatility}, index=long_dates)

# --- Visualization ---

fig, (ax1, ax2) = plt.subplots(1, 2, figsize=(14, 6))

# 1. Histogram with Density (KDE)

sns.histplot(long_ts['Value'], kde=True, ax=ax1, color='teal')

ax1.set_title('Histogram & Density Plot')

ax1.set_xlabel('Daily Value')

# 2. Boxplot by Month (Checking for Seasonal Volatility)

# We add a 'Month' column for grouping

long_ts['Month'] = long_ts.index.month_name().str[:3] # Jan, Feb, Mar...

sns.boxplot(x='Month', y='Value', data=long_ts, ax=ax2, palette="Blues") ax2.set_title('Value Distribution by Month')

Visualizing Change Over Time (Time-series) ❘ 201

plt.tight_layout()

plt.show()

In Listing 9-8, the seaborn library is used. This library builds on top of Matplotlib and makes creating statistical plots much easier. We use sns.histplot to create the histogram on the left, adding a kde=True (kernel density estimate) line to smooth out the shape. For the right chart, we use sns.

boxplot. By setting x='Month', we slice our time-series data into 12 separate buckets, as shown

This visualization allows us to instantly compare the volatility of different months side-by-side, revealing seasonal patterns in variance that a simple line chart would hide.

Seasonality and Autocorrelation

In the previous section, we used a rolling average to smooth out short-term fluctuations so we could see the long-term trend. For many businesses, those fluctuations aren’t just noise, they are critical patterns. A retailer needs to know exactly how much of their December revenue is due to true growth versus just the holiday rush.

To understand these patterns deeply, we need to look at the memory of our time series. Does high sales today predict high sales tomorrow? Does a spike in January always lead to a slump in February?

This is the domain of autocorrelation.

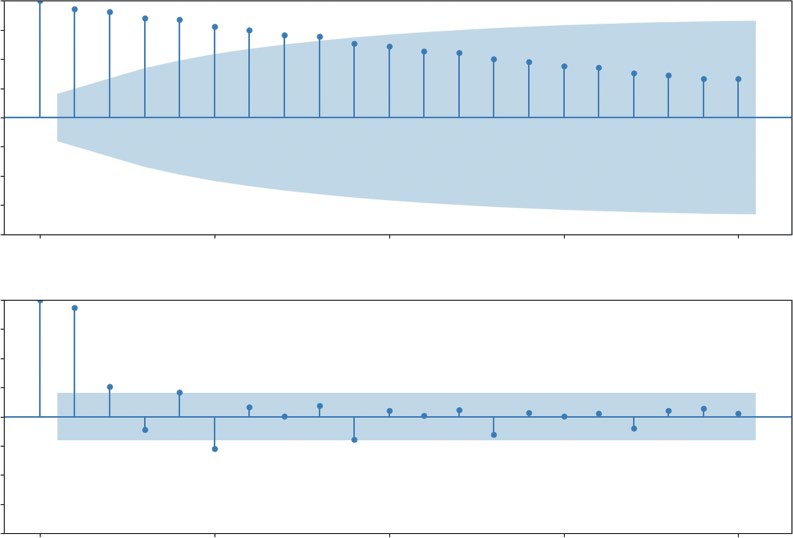

Autocorrelation and Partial Autocorrelation

Autocorrelation function (ACF) is the correlation of a time series with itself at previous time steps, known as lags. Think of it as a measure of the echo in your data. If you shout in a canyon, you hear your voice bounce back a few seconds later. In time-series data, an event today (like a hot summer day) might “echo” into tomorrow’s sales. ACF answers the question: How much does the value at time t depend on the value at time t − 1, t − 2, and so on?

Histogram & Density Plot

Value Distribution by Month

130

60

120

50

110

40

100

30

ount

alue

C

V

20

90

10

80

0

70

70

80

90

100

110

120

130

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec

Daily Value

Month

FIGURE 9-3: Histogram and density plot and value distribution by month.

202 ❘ CHAPTER 9 ILLusTRATIng TImE-sERIEs AnD LInEAR DATA High autocorrelation means the past strongly predicts the future. If today’s sales are high, tomorrow’s sales are also likely to be high. The echo is loud. Seasonal autocorrelation is a specific type of echo that repeats. You might see a strong correlation every seven days (a weekly cycle where Mondays look like other Mondays) or every four quarters (an annual cycle where Q4 always spikes). Partial autocorrelation (PACF) is a slightly more sophisticated tool that helps us isolate the direct cause of a correlation. It measures the correlation between today and a past lag, but crucially, it removes the influence of all the steps in between.

In the real world, data is rarely influenced by a single cycle. Most economic datasets exhibit multiperiodicity, where multiple echoes overlap simultaneously. For example, a retail store might see a daily cycle (evenings are busier than mornings), a weekly cycle (Saturdays outperform Tuesdays), and an annual cycle (the December holiday rush). When we plot these correlations, we aren’t just looking for one spike; we are looking for a complex interference pattern of these different waves. Recognizing multiperiodicity is crucial because it prevents us from misidentifying a short-term weekly spike as a long-term trend.

To see how PACF helps isolate these layers, imagine a heatwave that affects sales. Imagine a five-day scorcher:

Days 1 and 2: Sales are high due to the onset of the heat.

Day 3: Sales remain high, but is this because of Day 1 or simply because Day 2 was hot?

Days 4 and 5: The trend continues.

If we use standard autocorrelation (ACF), Day 1 will appear highly predictive of Day 5. However, this is a false direct link caused by the intervening days (the ripple effect). PACF mathematically controls for Days 2, 3, and 4. It asks: Once we account for the fact that yesterday was hot, does the temperature from four days ago add any new information? If the answer is no, the PACF for that lag will drop to zero. This allows us to ignore the noise of the heatwave and identify the true seasonal lag, perhaps a recurring weekly delivery day, that actually dictates the inventory we need to stock.

To demonstrate this, Listing 9-9 uses a classic economic dataset: Real Personal Consumption Expenditures (PCE). This data tracks how much money American households spend on goods and services, adjusted for inflation. Crucially, we are using the non-seasonally adjusted version. This means the raw data still contains all the natural spikes and drops of the calendar year.

LISTING 9-9: VISUALIZING AUTOCORRELATION IN ECONOMIC DATA

import pandas as pd

import matplotlib.pyplot as plt

from statsmodels.graphics.tsaplots import plot_acf, plot_pacf

# 1. Load the Real Economic Data

# Real Personal Consumption Expenditures (Quarterly)

url = "https://github.com/bkrayfield/Applied-Math-With-Python/raw/refs/heads/main/

Data/ND000349Q.csv"

pce_data = pd.read_csv(url, parse_dates=['observation_date'],

index_col='observation_date')

Visualizing Change Over Time (Time-series) ❘ 203

# 2. Visualization: ACF and PACF

fig, (ax1, ax2) = plt.subplots(2, 1, figsize=(12, 8))

# Plot Autocorrelation (ACF)

# We look at 20 lags (5 years of quarterly data)

plot_acf(pce_data['ND000349Q'], lags=20, ax=ax1)

ax1.set_title('Autocorrelation Function (ACF) - The "Echo"')

# Plot Partial Autocorrelation (PACF)

plot_pacf(pce_data['ND000349Q'], lags=20, ax=ax2)

ax2.set_title('Partial Autocorrelation Function (PACF) - The "Direct Link"') plt.tight_layout()

plt.show()

Listing 9-9 uses statsmodels to generate the ACF and PACF plots. We load the PCE dataset, ensuring the dates are parsed correctly. Then, we create a figure with two subplots. The plot_acf function calculates the correlation between the time series and its lagged versions for up to 20 quarters (five years). Similarly, plot_pacf calculates the partial autocorrelation. You can see the results of

Autocorrelation Function (ACF)—The “Echo”

1.00

0.75

0.50

0.25

0.00

–0.25

–0.50

–0.75

–1.00

0

5

10

15

20

Partial Autocorrelation Function (PACF)—The “Direct Link”

1.00

0.75

0.50

0.25

0.00

–0.25

–0.50

–0.75

–1.00

0

5

10

15

20

FIGURE 9-4: Autocorrelation and partial autocorrelation plots.

204 ❘ CHAPTER 9 ILLusTRATIng TImE-sERIEs AnD LInEAR DATA

CF) shows a very slow decay. The bars remain high and positive for many lags, indicating a strong long-term trend, values don’t change much from one quarter to the next. The bottom chart (PACF) tells a sharper story. The massive spike at Lag 1 indicates that the single best predictor of this quarter’s spending is the immediately preceding quarter.

To elaborate more on why these plots are important, the slow decay in the ACF plot (top chart) is a classic signature of a trend. It tells us that the data points are sticky. For example, if sales were high last quarter, they are likely to be high this quarter, next quarter, and so on. This confirms that the long-term growth we saw in the line chart isn’t an illusion; it’s a statistically significant property of the data. This plot shows how each progressive data point is linked as a chain. The second PACF plot (bottom plot) looks at how specific historical data points affect what happens to future data points.

For example, if the line was larger four quarters ago, then we can conclude what happened one year ago has a direct influence on what happens today.

In short, these plots mathematically demonstrate why we need to use a model that accounts for both trend and seasonality (like seasonal_decompose or SARIMA), rather than a simple average. They move you from guessing there’s a pattern to knowing exactly what that pattern looks like.

Time-series Decomposition

The previous section used a rolling average to smooth out short-term fluctuations so we could see the long-term trend. For many businesses, those fluctuations aren’t just noise, they are critical patterns. A retailer needs to know exactly how much of their December revenue is due to true growth versus just the holiday rush.

To answer this, we use time-series decomposition. This statistical technique takes a single line chart and mathematically splits it into three distinct components:

➤

Trend: The long-term direction (up or down), stripped of all other noise.

➤

Seasonality: The repeating patterns that happen at fixed intervals (e.g., every December, every weekend).

➤

Residuals (noise): The random randomness that remains after the trend and seasonality are removed. This is the “unexplained” part of the data.

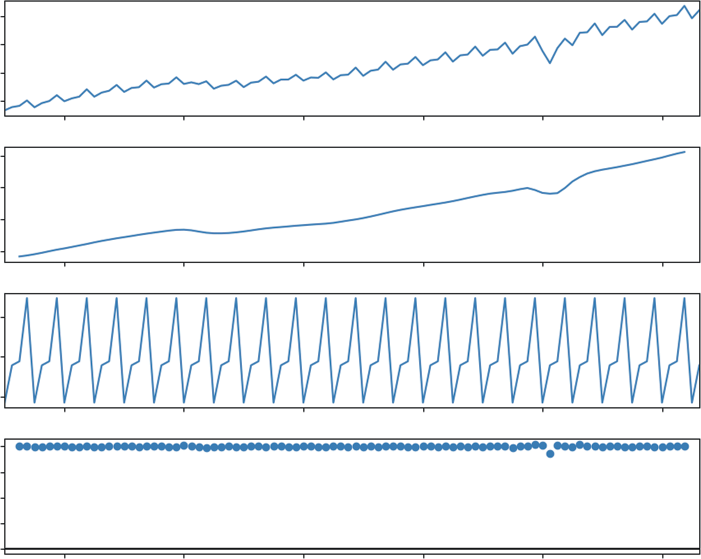

To demonstrate this, we’ll continue with the PCE dataset. Even more important, we are using the non-seasonally adjusted version. This means the raw data still contains all the natural spikes and drops of the calendar year (like the massive surge in spending every Q4 for the holidays). This makes it the perfect candidate for decomposition: we can use Python to find that holiday signal and separate it from the underlying economic growth.

In this analysis, we use a multiplicative model. Unlike an additive model, which assumes seasonal swings are a constant dollar amount, a multiplicative model assumes that seasonal effects are proportional to the trend. As the economy grows, the holiday spike scales up with it. Mathematically, we are representing the data as follows:

Observed = Trend ∗ Seasonality ∗ Residual

Visualizing Change Over Time (Time-series) ❘ 205

We can perform this advanced analysis with a single function from the statsmodels library called seasonal_decompose, shown in Listing 9-10.

LISTING 9-10: VISUALIZING SEASONALITY VS. TREND IN ECONOMIC DATA

import pandas as pd

import matplotlib.pyplot as plt

from statsmodels.tsa.seasonal import seasonal_decompose

# 1. Load the Real Economic Data

# Real Personal Consumption Expenditures (Quarterly)

url = "https://github.com/bkrayfield/Applied-Math-With-Python/raw/refs/heads/main/

Data/ND000349Q.csv"

pce_data = pd.read_csv(url, parse_dates=['observation_date'],

index_col='observation_date')

# 2. Run the Decomposition

# We specify 'period=4' because the data is Quarterly (4 points per year)

# model='multiplicative' is often better for economic data where volatility grows with the trend,

# but 'additive' is easier to interpret for a first example.

result = seasonal_decompose(pce_data['ND000349Q'], model=' multiplicative', period=4)

# 3. Visualization

# The result object has a built-in .plot() function that creates a 4-panel chart fig = result.plot()

fig.set_size_inches(10, 8) # Make it large enough to read

plt.suptitle('Decomposing Personal Consumption Expenditures (PCE)', fontsize=16, y=1.02)

plt.show()

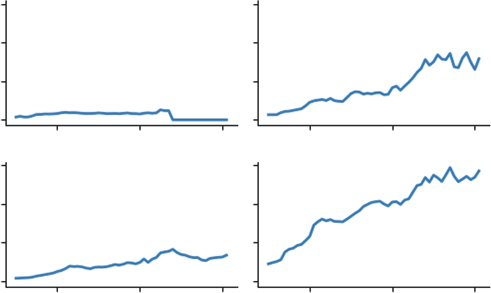

In Listing 9-10, the core analysis happens with the seasonal_decompose function. We pass it two critical arguments. First, model='multiplicative' tells the function to treat the seasonal component as a percentage or factor of the trend. This is a more realistic x-ray for the PCE data because, over several decades, U.S. spending has increased significantly; it makes sense that the holiday surge in 2023 is much larger in absolute dollars than it was in 1970, even if the percentage of growth remains similar. Second, period=4 informs the algorithm that our data is quarterly, identifying the pattern that repeats every four observations.

The top panel, Observed, shows the raw, jagged path of the economy. The second panel, Trend, reveals the smooth underlying growth, clearly highlighting structural shifts like the flattening during the 2008 financial crisis.

The third panel, Seasonal, is where the multiplicative logic shines. Instead of showing dollar amounts, it shows a ratio. A value of 1.05 in Q4 would indicate that spending is 5% higher than the trend due to the season. This consumer heartbeat remains consistent even as the economy scales. Finally, the Residuals panel shows the noise. Because this is a multiplicative model, the residuals are centered on 1.0 rather than 0. Any sharp deviations from 1.0 represent shocks to the system, unpredictable events like the 2020 pandemic lockdowns that disrupted both the trend and the expected seasonal rhythm.

206 ❘ CHAPTER 9 ILLusTRATIng TImE-sERIEs AnD LInEAR DATA

Decomposing Personal Consumption Expenditures (PCE)

ND000349Q

4000

3500

3000

2500

4000

3500

Trend 3000

2500

1.02

1.00

Seasonal 0.98

1.00

0.75

esid 0.50

R 0.25

0.00

2004

2008

2012

2016

2020

2024

FIGURE 9-5: A time-series decomposition of U.S. consumer spending.

PANEL DATA

Finally, we turn to the most complex data structure: panel data. This is data that tracks multiple subjects over multiple time periods. Imagine a dataset tracking the price of energy across different sectors (residential, commercial, industrial) and fuel types over 50 years.

If you try to plot this on a single line chart, you get a spaghetti chart, a tangled mess of overlapping lines that is impossible to read. The solution is small multiples (or faceting). Instead of one big chart, you create a grid of smaller charts, one for each category. This allows the eye to easily compare trends without visual clutter.

Listing 9-11 creates small multiples using data composed of energy prices by sectors. We use a dataset of energy prices in New York State from 1970 to 2022. It contains prices for different fuel types (natural gas, electricity) across different economic sectors.

Panel Data ❘ 207

LISTING 9-11: CREATING SMALL MULTIPLES WITH SEABORN

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sns

# 1. Load the Raw Data

energy_data = pd.read_csv(url)

# 2. Reshape from "Wide" to "Long" Format

# The data has fuel types as separate columns (Coal, Natural Gas, etc.).

# Seaborn prefers "Long" format: one column for "Fuel Type" and one for "Price".

fuel_cols = ['Coal', 'Propane', 'Natural Gas', 'Electricity']

energy_long = energy_data.melt(

id_vars=['Year', 'Sector'], # Identifiers to keep

value_vars=fuel_cols, # Columns to unpivot

var_name='Fuel Type', # New column name for headers

value_name='Price' # New column name for values

)

# 3. Clean the Price Column

# Prices have '$' signs (e.g., "$1.09"), so pandas reads them as strings/objects.

# We must remove the '$' and convert to float.

energy_long['Price'] = energy_long['Price'].astype(str).str.

replace('$', '', regex=False)

energy_long['Price'] = pd.to_numeric(energy_long['Price'], errors='coerce')

# Filter for just "Residential" to make the chart focused

residential_energy = energy_long[energy_long['Sector'] == 'Residential']

# 4. Create Small Multiples

g = sns.relplot(

data=residential_energy,

x="Year", y="Price",

col="Fuel Type", # Create a separate chart for each Fuel Type kind="line",

col_wrap=2, # Start a new row after 3 charts

height=2, aspect=1.5,

linewidth=2

)

# Add Titles

g.fig.suptitle('Residential Energy Price Trends (Per Million BTU1970-2021)', y=1.02, fontsize=16)

plt.show()

In Listing 9-11, we use seaborn’s relplot function, which is designed specifically for handling complex, multi-dimensional data. The key argument is col="Fuel Type". This tells seaborn to slice the data by fuel type and automatically generate a separate subplot for each one. The col_wrap=2 code

208 ❘ CHAPTER 9 ILLusTRATIng TImE-sERIEs AnD LInEAR DATA

Residential Energy Price Trends (Per Million BTU, 1970–2021)

Fuel Type = Coal

Fuel Type = Propane

60

40

riceP 20

0

Fuel Type = Natural Gas

Fuel Type = Electricity

60

40

riceP 20

0

1980

2000

2020

1980

2000

2020

Year

Year

FIGURE 9-6: Residential energy prices.

ensures the charts are arranged in a neat grid rather than in one long row. You can see this visual in

This visualization technique reveals insights that would be hidden in a single chart. We can instantly see that electricity prices have been relatively stable and high, while fuel oil and propane prices are extremely volatile, spiking dramatically during geopolitical crises. By separating the signals, we respect the complexity of the data while keeping the story clear.

SUMMARY

This chapter explored the critical role of data visualization in translating raw numbers into actionable business insights. It established that the first step in any visualization task is diagnosing the fundamental structure of the data: cross-sectional (a snapshot in time), time-series (a historical sequence), or panel data (multidimensional history). The chapter demonstrated how identifying these structures allows analysts to select the appropriate visualization strategy, whether utilizing bar charts for direct comparisons, line charts for analyzing trends, or faceted plots to untangle complex, multi-subject datasets.

It then focused heavily on the mechanics of working with time-series data, utilizing Python’s Matplotlib and Pandas libraries to move beyond simple plotting. You learned about techniques for revealing long-term signals amid short-term noise using rolling averages and addressed common real-world data issues by applying time-based interpolation to repair missing values. The chapter also examined how to change the temporal resolution of data through resampling, aggregating granular observations into meaningful business cycles, and how to visualize volatility and distribution using histograms and boxplots.

Finally, the chapter introduced advanced diagnostic techniques to mathematically quantify the patterns hidden within time-series data. It utilized autocorrelation (ACF) and partial autocorrelation

Continue Your Learning ❘ 209

(PACF) to measure the memory or echo within a dataset and applied time-series decomposition to separate data into its constituent parts: trend, seasonality, and residual noise. The chapter concluded by applying these principles to panel data, using seaborn to create organized, comparative views that prevent visual clutter and highlight relationships across different categories over time.

CONTINUE YOUR LEARNING

Data visualization and time-series analysis are disciplines that bridge the gap between engineering and art. While this chapter provided the technical foundation for revealing patterns in noise, mastering the aesthetic and theoretical sides of these topics will make your analysis significantly more persuasive.

The following resources are curated to help you master the libraries we used and deepen your theoretical understanding:

Official Documentation

➤

Matplotlib: The foundational library for Python plotting. The gallery section is particularly useful for finding code snippets for specific chart types.

➤

Seaborn: The high-level interface for statistical graphics. Their tutorial on visualizing statistical relationships is excellent for understanding panel data and complex distributions.

➤

Pandas Time Series: The definitive guide to the functionality that makes Python so powerful for financial and economic analysis, covering offsets, shifting, and frequency conversion.

➤

Statsmodels Time Series analysis: Deep documentation for the advanced components we touched on, such as decomposition, stationarity tests, and autocorrelation.

Recommended Reading

➤

Storytelling with Data by Cole Nussbaumer Knaflic: This book focuses less on code and more on the design principles for creating charts that effectively communicate a message to stakeholders. It is essential reading for the “last mile” of analytics.

➤

Python for Data Analysis by Wes McKinney: Written by the creator of Pandas, this book offers the most in-depth look at the mechanics of data manipulation, particularly for time-series operations and cleaning messy datasets.

➤

Effective Pandas 2 by Matt Harrison: A standard in the field for learning idiomatic Pandas and data manipulation patterns. This is an excellent resource for those looking to write

“Treading on Python” style code, with updated editions covering the latest features in Pandas.

➤

Forecasting: Principles and Practice by Hyndman and Athanasopoulos: While the code examples are in R, this is widely considered the gold standard textbook for the theory behind time-series decomposition and forecasting.

210 ❘ CHAPTER 9 ILLusTRATIng TImE-sERIEs AnD LInEAR DATA In addition to generating charts, you will frequently need to manipulate the shape of your time-series data to prepare it for analysis. T

Pandas and Statsmodels used to structure, smooth, and diagnose temporal data.

TABLE 9-1: Essential Visualization and Time-series Functions FUNCTION/METHOD

LIBRARY

DESCRIPTION

.to_datetime()

Pandas

Converts string arguments to datetime

objects. The critical first step for any

time-series analysis.

.set_index()

Pandas

Moves a column (usually the date) to the

DataFrame index, enabling time-aware

slicing and plotting.

.rolling(window=n).mean()

Pandas

Calculates a moving average over a

specified window n to smooth out

short-term noise and reveal trends.

.interpolate(method='time')

Pandas

Fills missing values (NaN) by drawing a

line between existing points, respecting

the time distance between them.

.resample(rule).func()

Pandas

Changes the frequency of the data (e.g.,

Daily to Monthly). Must be chained with

an aggregation function like.sum() or.

mean().

.shift(periods=n)

Pandas

Shifts the index by n periods. Essential

for calculating percent changes or

creating lag features for models.

seasonal_decompose()

Statsmodels

Mathematically separates a time series

into three distinct components: Trend,

Seasonality, and Residuals.

plot_acf() / plot_pacf()

Statsmodels

Visualizes autocorrelation and partial

autocorrelation to diagnose the “mem-

ory” and cyclic dependency in the data.

sns.relplot(kind='line')

Seaborn

The primary function for creating “small

multiples” (faceted plots) to visualize

panel data without clutter.

10

Illustrating Cross-sectional Data

If time-series analysis, covered in the previous chapter, is akin to watching a movie of your business history, cross-sectional analysis is like examining a high-resolution photograph. You are freezing time to look at the relationships between different entities at a single, distinct moment. In the previous chapter, we asked how we got here. This chapter pivots to an equally critical question—where are we right now?

Cross-sectional visualization allows you to ignore the timeline and focus on structure. It answers questions of rank, such as identifying which product is the current bestseller; questions of distribution, such as determining if your customer base is predominantly young or old; and questions of correlation, such as asking if higher advertising spend actually leads to higher sales volume. In this chapter, you will master the art of comparison using bar charts, explore the shape of data using histograms and boxplots, and reveal hidden relationships between variables using scatterplots.

DATA CATEGORIES

Business questions often revolve around structure rather than magnitude. We need to understand the makeup of our data. This is the domain of categorical analysis, specifically part-to-whole comparisons. Whether you are breaking down a marketing budget, analyzing market share, or looking at the inventory mix of a warehouse, the goal is to visualize how multiple small parts combine to form the total picture. The following sections explore how to answer these questions using visuals.

The Pie Chart

When the analytical question shifts from ranking to composition, we are no longer looking for the highest value; we are looking for the share of the total. We want to know how a specific entity, like a budget, a market, or a dataset, is divided into its constituent parts. The fundamental tool for this is the pie chart.

212 ❘ CHAPTER 10 IllusTRATIng CRoss-sECTIonAl DATA The pie chart represents a single categorical variable as a circle, where the entire area corresponds to 100% of the data. The circle is sliced into sectors, with the arc length and angle of each slice proportional to the category’s contribution to the whole. It provides stakeholders with an immediate, intuitive sense of proportion, allowing them to quickly identify dominant categories without reading specific numbers.

Listing 10-1 visualizes the composition of our vegetable dataset (from Chapter 9) to see the breakdown of different forms (Fresh, Canned, Frozen, etc.). As a reminder, the vegetable dataset is a cross-sectional dataset showing the prices of different vegetables and the manner they are delivered (form: like Frozen or Fresh).

NOTE Note that Listing 10-1 uses a CSV file called Vegetable-Prices-2022.

csv found on GitHub. This file, along with the files used in the other listings in this chapter, are also included in the downloadable files for this book, located on the Wiley site at .

LISTING 10-1: THE STANDARD PIE CHART

import matplotlib.pyplot as plt

import pandas as pd

import seaborn as sns

# Load the Data

url = "https://github.com/bkrayfield/Applied-Math-With-Python/raw/refs/heads/main/

Data/Vegetable-Prices-2022.csv"

veg_prices = pd.read_csv(url)

# 1. Prepare the Data

# We count the frequency of each form to get the 'parts' of the whole form_counts = veg_prices['Form'].value_counts()

# 2. Visualization

plt.figure(figsize=(8, 8))

# We use Matplotlib's native pie function

plt.pie(

form_counts,

labels=form_counts.index,

autopct='%1.1f%%', # Format the percentages (e.g., 15.5%)

startangle=140, # Rotate the chart to a pleasing angle

colors=sns.color_palette('pastel') # Use a soft color palette

)

plt.title('Composition of Vegetable Forms', fontsize=16)

plt.show()

In Listing 10-1, we utilize Matplotlib’s .pie() function. Unlike bar or scatterplots, which require X

and Y coordinates, a pie chart requires only a single array of numerical values (form_counts). The

Data Categories ❘ 213

function automatically calculates the total sum and determines the angle for each slice. We utilize the autopct parameter to overlay the calculated percentages directly onto the chart; the string format

'%1.1f%%' instructs Python to display the number as a float with one decimal place followed by a percent sign. Finally, startangle=140 allows us to rotate the entire chart, ensuring that the labels are positioned in the most readable orientation.

This figure utilizes a pie chart to visualize the composition of the dataset by vegetable form, providing an immediate “part-to-whole” comparison. The chart reveals that fresh vegetables dominate the dataset, accounting for nearly half of all items at 45.2%.

The remaining half is split between processed forms, with canned vegetables representing 25.8%, frozen vegetables representing 20.4%, and dried vegetables making up the smallest portion at 8.6%.

This distribution clearly indicates that the dataset is balanced roughly 50/50 between fresh produce and preserved alternatives.

We can further customize the pie chart to emphasize specific insights or improve aesthetics. If a particular category requires immediate attention, such as highlighting the prevalence of fresh vegetables, we can use the explode parameter. This argument accepts a collection of values corresponding to the slices; setting a non-zero value (e.g., 0.1) for a specific slice will “explode” or offset it from the center, isolating it visually. Additionally, analysts often prefer a donut chart variation, which can be achieved in Matplotlib by adding the wedgeprops argument (e.g., wedgeprops={'width': 0.5}).

This hollows out the center, reducing the visual mass of the chart and shifting the focus to the length of the arcs rather than the total area.

Donut Charts

In the business world, the pie chart is ubiquitous. It is the default choice for showing part-to-whole composition, such as market share or budget allocation. However, among data scientists and Composition of Vegetable Forms

Frozen

Dried

20.4%

8.6%

25.8%

Canned

45.2%

Fresh

FIGURE 10-1: Pie chart of vegetables.

214 ❘ CHAPTER 10 IllusTRATIng CRoss-sECTIonAl DATA visualization experts, it is viewed with skepticism. The criticism is not just aesthetic; it is functional.

Pie charts are notoriously difficult to read with precision. When slices are similar in size, it is nearly impossible for a viewer to distinguish the difference based on the angle alone. Furthermore, comparing slices that are not adjacent requires the viewer to mentally rotate the shapes, increasing cognitive load and the likelihood of error.

Despite these limitations, stakeholders often demand them because they provide an immediate, intuitive sense of “wholeness” that a bar chart lacks. If you must use a circular visualization, the donut chart is a superior alternative. By removing the center, you remove the most difficult aspect of the chart to interpret, the angles at the vertex. This forces the eye to compare the arc lengths of the outer ring, which is slightly more intuitive. Additionally, the empty center provides valuable real estate to display a summary statistic, such as the grand total, making the chart more information-dense.

Listing 10-2 visualizes the composition of our vegetable dataset to show the proportion of items that are fresh versus canned versus frozen.

LISTING 10-2: CREATING A DONUT CHART WITH MATPLOTLIB

import matplotlib.pyplot as plt

import pandas as pd

# Load the Data

url = "https://github.com/bkrayfield/Applied-Math-With-Python/raw/refs/heads/main/

Data/Vegetable-Prices-2022.csv"

veg_prices = pd.read_csv(url)

# 1. Prepare the Data

# Count the frequency of each form

form_counts = veg_prices['Form'].value_counts()

# 2. Visualization

plt.figure(figsize=(8, 8))

# Create the Pie Chart

# autopct formats the values as percentages (e.g., '12.5%')

# startangle=90 rotates the start to the top (12 o'clock)

plt.pie(

form_counts,

labels=form_counts.index,

autopct='%1.1f%%',

startangle=90,

colors=sns.color_palette('pastel'),

wedgeprops={'edgecolor': 'white', 'linewidth': 2}

)

# 3. Transform into a Donut

# We draw a white circle in the center to cover the middle

centre_circle = plt.Circle((0, 0), 0.70, fc='white')

fig = plt.gcf()

fig.gca().add_artist(centre_circle)

Data Categories ❘ 215

plt.title('Distribution of Vegetable Forms in Dataset', fontsize=16)

plt.show()

In Listing 10-2, we rely on Matplotlib’s foundational .pie() function. The transformation into a donut is a visual hack. Matplotlib does not have a native donut function. Instead, we create a standard pie chart and then instantiate a plt.Circle object. The arguments (0, 0) and 0.70 place the circle at the origin with a radius of 0.7 (covering 70% of the pie). We then access the current figure using plt.gcf() and “add the artist” (the circle) on top of the existing plot. This technique creates the modern ring aesthetic while preserving the underlying statistical proportions.

The .pie() function offers several parameters to refine the presentation of categorical data beyond basic slices. You can use explode to pass an array of offsets that pull specific slices away from the center, which is ideal for highlighting a particular data point. The wedgeprops dictionary allows for fine-grained control over the slices themselves, such as setting edgecolor and linewidth to create clear boundaries or using width to transform the pie into a donut chart. Additionally, textprops can be used to modify the font size and color of labels, while the normalize parameter ensures that the data scales correctly to a full circle even if the input values don’t sum to 1 or 100.

format. By removing the center, the visualization shifts the viewer’s focus from the angles at the vertex to the arc lengths of the outer ring, which many find easier to compare. The statistical breakdown remains identical; fresh vegetables comprise the clear majority at 45.2%, followed by canned at 25.8%, frozen at 20.4%, and dried at 8.6%, but the inclusion of whitespace in the middle produces a cleaner aesthetic and eliminates the visual clutter where the slices would normally converge.

Distribution of Vegetable Forms in Dataset

Dried

8.6%

Frozen

20.4%

Fresh

45.2%

25.8%

Canned

FIGURE 10-2: Donut plot.

216 ❘ CHAPTER 10 IllusTRATIng CRoss-sECTIonAl DATA The .pie() function offers several parameters to further refine the presentation of categorical data beyond basic slices. You can use the explode parameter to pass an array of offsets that pull specific slices away from the center, which is ideal for highlighting a particular data point like the dominant Fresh category. The wedgeprops dictionary allows for fine-grained control over the slices themselves; beyond setting the edgecolor and linewidth to create clear boundaries, you can also use the width key here to create a donut chart natively (without the circle overlay hack). Additionally, textprops can be used to modify the font size and color of labels, while the normalize parameter ensures that the data scales correctly to a full circle even if the input values don’t sum to exactly 1 or 100.

Stacked Bar Charts

While pie and donut charts are effective for high-level summaries, they can be inefficient when space is limited or when you need to compare multiple compositions side-by-side. In these instances, the stacked bar chart offers a distinct advantage. It functions effectively as a linear pie chart, unrolling the circle into a single rectangular bar.

This transformation allows viewers to judge proportions based on length, a task the human eye performs with high accuracy, rather than angle or area. Furthermore, stacked bars are exceptionally space-efficient. They allow you to display complex part-to-whole relationships within the compact rows of a table or a tight dashboard panel, where a circular chart would be too bulky to be legible.

Listing 10-3 utilizes Matplotlib’s core primitives to build a custom visualization. This is necessary because a stacked bar is technically just a series of standard bars placed end-to-end.

LISTING 10-3: THE STACKED BAR (A BETTER ALTERNATIVE)

import matplotlib.pyplot as plt

import pandas as pd

# Load the Data

url = "https://github.com/bkrayfield/Applied-Math-With-Python/raw/refs/heads/main/

Data/Vegetable-Prices-2022.csv"

veg_prices = pd.read_csv(url)

# 1. Create a Frequency Table (Composition Data)

# We count how many vegetables exist for each form

composition = veg_prices['Form'].value_counts().reset_index()

composition.columns = ['Form', 'Count']

# Calculate Percentage

total = composition['Count'].sum()

composition['Percentage'] = (composition['Count'] / total) * 100

# 2. Visualization

plt.figure(figsize=(10, 2))

# Create a horizontal stacked bar

# We use the 'Percentage' column for the width so the axis spans 0-100

left = 0

Correlations and Distributions ❘ 217

for i, row in composition.iterrows():

plt.barh(

y=0,

width=row['Percentage'],

left=left,

label=f"{row['Form']} ({row['Percentage']:.0f}%)"

)

left += row['Percentage']

plt.title('Dataset Composition: Vegetable Forms by Percentage')

plt.yticks([]) # Remove y-axis ticks

plt.xlabel('Percentage of Total (%)')

plt.xlim(0, 100) # Force the axis to end exactly at 100

# Adjust legend placement

# bbox_to_anchor moves the legend relative to the anchor point

plt.legend(ncol=4, loc='upper center', bbox_to_anchor=(0.5, -0.35), frameon=False)

# Explicitly add space at the bottom for the legend

plt.subplots_adjust(bottom=0.45)

plt.show()

For this stacked bar chart, we manage the placement of the bars using the left variable, which acts as an accumulator. It starts at 0 and increments by the width of each bar (left +=

row['Percentage']) after every iteration of the loop. This ensures that the start of the next bar aligns perfectly with the end of the previous one.

Critically, Listing 10-3 visualizes the percentage of vegetable forms (Canned, Fresh, or Frozen), not the raw count. By setting width=row['Percentage'] and enforcing plt.xlim(0, 100), we normalize the visual scale, guaranteeing the bar spans exactly from 0 to 100 units regardless of the dataset size.

Finally, we address the common issue of legend overlap using plt.subplots_adjust(bottom=0.45).

This command essentially shrinks the height of the chart content within the window, creating a reserved margin of whitespace at the bottom where the legend can reside without obstructing the data or being clipped by the window frame.

This figure visualizes the dataset composition using a horizontal stacked bar chart, essentially unrolling the previous pie chart into a single linear track. The entire length of the bar represents 100% of the data, segmented by color to show the relative contribution of each form. Fresh vegetables clearly dominate the distribution, occupying the first 45% of the bar, followed by canned at 26%, frozen at 20%, and dried at 9%. This layout facilitates a direct comparison of segment lengths along the x-axis, offering a more precise and space-efficient alternative to circular charts for gauging part-to-whole relationships.

CORRELATIONS AND DISTRIBUTIONS

The most common task in business analytics is ranking. You are often presented with a categorical list, sales reps, product lines, or store locations, and the immediate business need is to identify who is

218 ❘ CHAPTER 10 IllusTRATIng CRoss-sECTIonAl DATA Dataset Composition: Vegetable Forms by Percentage

0

20

40

60

80

100

Percentage of Total (%)

Fresh (45%)

Canned (26%)

Frozen (20%)

Dried (9%)

FIGURE 10-3: Stacked bar chart.

outperforming the pack and who is lagging behind. While a spreadsheet or a table offers precision to the umpteenth decimal place, it fails at pattern recognition. To find the maximum value in a table of 50 states, your brain must read 50 individual numbers, hold them in short-term memory, and compare them. A visual comparison makes the maximum obvious in milliseconds. Two ways to do visual comparisons are bar charts and boxplots.

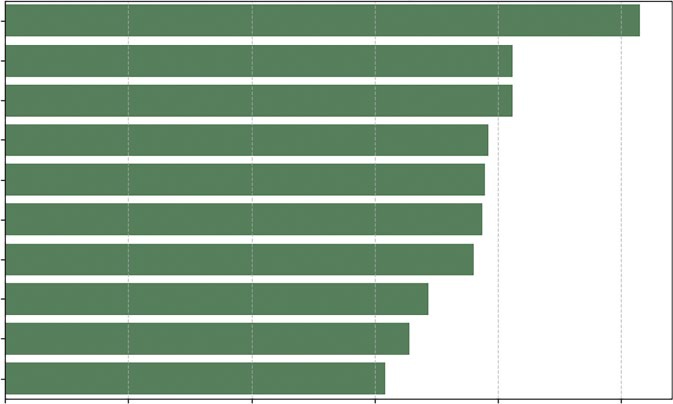

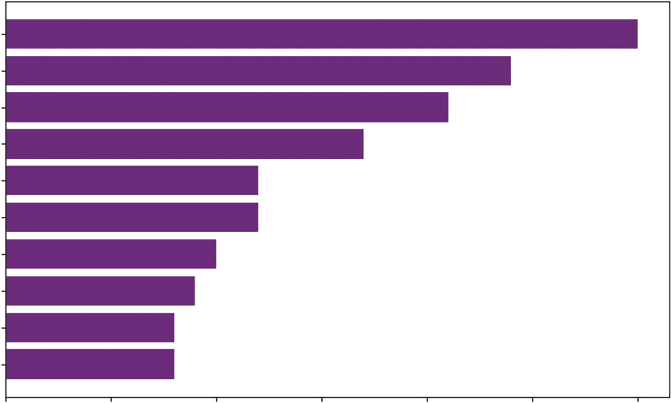

Bar Charts

The bar chart (sometimes called a bar plot) is the undisputed workhorse for this type of cross-sectional comparison. However, a common mistake that analysts make is sticking to the default vertical alignment found in most software. When your category names are long, for example, “Enterprise Software License” versus “Consumer App,” vertical labels often overlap, become unreadable, or get rotated 90 degrees, forcing the readers to tilt their heads to read the axis.

To solve this, we apply a simple heuristic: if you have more than five categories, or if your category names are long, use a horizontal bar chart. Furthermore, the order of the bars matters immensely.

An unsorted bar chart is just a column forest that forces the eye to jump back and forth to compare heights. Sorted bars create a staircase effect, allowing the viewer to instantly group the high-cost items versus the low-cost items. Another solution to this issue could be to rotate the labels 45 degrees.

Listing 10-4 creates a hierarchal bar chart of the vegetable data we introduced earlier in this chapter to compare retail prices effectively.

LISTING 10-4: THE HORIZONTAL BAR CHART

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sns

# 1. Load the Data

url = "https://github.com/bkrayfield/Applied-Math-With-Python/raw/refs/heads/main/

Data/Vegetable-Prices-2022.csv"

veg_prices = pd.read_csv(url)

# 2. Prepare the Data

# Filter for just "Fresh" vegetables to make a fair comparison

Correlations and Distributions ❘ 219

# and sort the values to create a logical "staircase" visual fresh_veg = veg_prices[veg_prices['Form'] == 'Fresh'].sort_values('RetailPrice', as cending=False).head(10)

# 3. Visualization

plt.figure(figsize=(10, 6))

# We use orient='h' for horizontal bars to accommodate long labels

sns.barplot(

data=fresh_veg,

x='RetailPrice',

y='Vegetable',

color='seagreen'

)

plt.title('Top 10 Most Expensive Fresh Vegetables (2022)', fontsize=14) plt.xlabel('Price per Pound ($)')

plt.ylabel('') # Remove the y-label as it's self-explanatory

plt.grid(axis='x', linestyle='--', alpha=0.7)

plt.show()

In Listing 10-4, the code begins with a rigorous data preparation phase using Pandas chained operations. We first apply a Boolean mask [veg_prices['Form'] == 'Fresh'] to isolate a specific subset of data; comparing fresh produce to canned or frozen goods would introduce skew due to processing costs, so this filtering is statistically essential. Immediately following the filter, we invoke.

sort_values('RetailPrice', ascending=False). This is a critical step for visualization; without sorting the DataFrame before passing it to the plotting library, the resulting chart would display bars in random index order, destroying the ability to quickly rank items. We conclude the chain with.

head(10) to limit our dataset to the top outliers, preventing the chart from becoming overcrowded.

The visualization itself relies on Seaborn’s sns.barplot() function. We explicitly map the quantitative variable RetailPrice to the x-axis and the categorical variable Vegetable to the y-axis. While modern versions of Seaborn can infer orientation based on data types, explicit mapping ensures stability. By setting color='seagreen', we override the default multi-colored palette; in a ranking chart where distinct colors do not represent distinct data groups, using a single uniform color reduces cognitive load and keeps the focus on the length of the bars. Finally, plt.grid(axis='x') is added to improve readability, allowing the eye to trace the end of a bar down to the specific value on the x-axis.

W followed by spinach, mushrooms, and more. The bar chart provides a simple way to determine quantity. The choice of vertical or horizontal is usually based on the preference or the shape of your data.

Seaborn’s barplot function offers extensive customization options to adapt the chart to more complex data stories. While we used color to set a uniform tone, you can use the hue parameter to introduce a second categorical variable, which will split each bar into sub-groups (comparing prices across different years side-by-side). You can also utilize the palette parameter to apply meaningful color maps, such as a diverging palette if your data centers around zero, or a sequential palette to emphasize magnitude. Additionally, because Seaborn is built on top of Matplotlib, you can fine-tune axes using standard commands; for example, if you prefer vertical bars but have tight spacing, you

220 ❘ CHAPTER 10 IllusTRATIng CRoss-sECTIonAl DATA

Top 10 Most Expensive Fresh Vegetables (2022)

Okra

Spinach, boiled

Spinach, eaten raw

Mushrooms, sliced

Mushrooms, whole

Tomatoes, grape, & cherry

Cauliflower florets

Kale

Lettuce, romaine, hearts

Collard greens

0

1

2

3

4

5

Price per Pound ($)

FIGURE 10-4: Bar chart of fresh vegetables.

can use plt.xticks(rotation=45) to angle your labels legibly. Finally, the errorbar parameter (formerly ci) allows you to automatically calculate and display confidence intervals, adding a layer of statistical rigor to your visual comparison.

Boxplots

One of the most misunderstood concepts in data analysis is the average. Averages obscure reality. For example, if you have two people, and one earns nothing and the other earns $100,000, the average salary is $50,000, a number that accurately describes neither person. To truly understand cross-sectional data, you must understand its distribution. You need to know if your data is clustered tightly around the middle (a normal distribution) or if it is skewed by a few (a long-tail distribution).

For example, we can ask whether fresh produce is significantly more volatile in price than canned produce.

To visualize this in Listing 10-5, we use the boxplot. It is a standardized way of displaying data based on a five-number summary: minimum, first quartile (25%), median, third quartile (75%), and maximum.

LISTING 10-5: COMPARING DISTRIBUTIONS WITH BOXPLOTS

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sns

Correlations and Distributions ❘ 221

# Load the Data

url = "https://github.com/bkrayfield/Applied-Math-With-Python/raw/refs/heads/main/

Data/Vegetable-Prices-2022.csv"

veg_prices = pd.read_csv(url)

plt.figure(figsize=(12, 6))

# We filter out rare forms to keep the chart clean

common_forms = veg_prices[veg_prices['Form'].isin(['Fresh', 'Canned', 'Frozen',

'Dried'])]

sns.boxplot(

data=common_forms,

x='Form',

y='RetailPrice',

palette='coolwarm'

)

plt.title('Price Distributions by Vegetable Form')

plt.xlabel('Form')

plt.ylabel('Retail Price per Pound ($)')

plt.grid(axis='y', linestyle='--', alpha=0.3)

plt.show()

The code in Listing 10-5 delegates significant statistical computation to the sns.boxplot function.

When we pass x='Form' and y='RetailPrice', Seaborn groups the DataFrame by the unique values in the Form column. For each group, it automatically calculates the interquartile range (IQR), which is the distance between the 25th percentile and the 75th percentile. This range forms the

The function then calculates the whiskers (usually 1.5

times the IQR) to determine reasonable boundaries for the data. Any data point existing outside these calculated whiskers is rendered as an individual diamond or dot. This automatic outlier detection is why the boxplot is superior to a bar chart for risk analysis; it visually separates the “normal” variation from the extreme anomalies (like the wildly expensive dried mushrooms) without requiring the user to write manual filtering logic.

which utilizes a boxplot to compare the statistical distribution of retail prices across four vegetable forms: fresh, canned, frozen, and dried.

it is important to understand the specific statistical attributes that define the boxplot’s structure. The box represents the interquartile range (IQR), while the horizontal line within it denotes the median. In Seaborn, these elements are controlled by specific parameters: whis defines the length of the whiskers (commonly set to 1.5 times the IQR), and showfliers determines whether the extreme outliers (the dots seen in the Canned and Frozen categories) are displayed.

Furthermore, you can enhance the comparison using the notch=True attribute, which creates a narrowed area around the median to represent a confidence interval, or showmeans=True to add a distinct marker for the arithmetic average. These coded values allow for a precise fine-tuning of the styles, box widths, and cap lengths to make the volatility in the Fresh category even more visually distinct.

, evidenced by its tall interquartile range (the height of the box) and long whiskers, indicating that fresh produce prices vary

222 ❘ CHAPTER 10 IllusTRATIng CRoss-sECTIonAl DATA

Price Distributions by Vegetable Form

7

6

5

ound ($)

4

rice per P 3

ail PetR

2

1

Fresh

Canned

Frozen

Dried

Form

FIGURE 10-5: Boxplot.

widely from under $1 to over $5 per pound. In contrast, canned vegetables (the gray box in the middle) show a highly compressed distribution with a low median price, suggesting stability and consistency in pricing, though the distinct dots appearing above the whiskers reveal specific outliers, likely specialty items, that break this trend. The Frozen category shows a tighter price spread than fresh produce but contains the single most extreme outlier in the dataset, reaching nearly $7 per pound. Finally, the Dried category does not show outliers and has a median price around $2.

CORRELATIONS IN THE CROSS SECTION

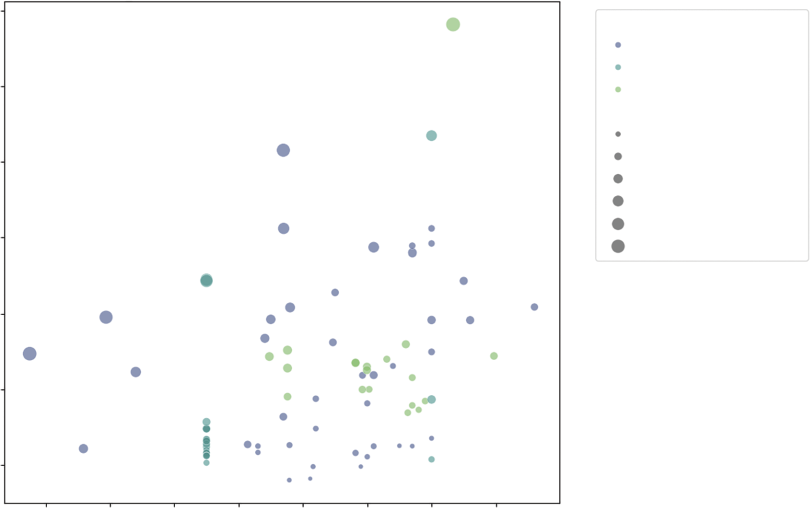

Analyzing cross-sectional data requires more than just looking at individual distributions; it requires an investigation into how different variables interact with one another. To uncover these relationships, we primarily rely on two distinct but complementary visualization techniques. First, we use scatterplots to observe the raw, granular interaction between two or more variables, allowing us to spot nonlinear patterns and individual outliers. Second, we utilize correlation heatmaps to provide a high-level statistical summary of the entire dataset, using color-coded grids to quantify the strength of relationships between all numerical variables at once. By combining these two views, you can move from identifying broad trends to inspecting the specific data points that drive them.

Scatterplots

Cross-sectional data shines when you want to understand how two different variables interact. This is the domain of correlation. In our vegetable dataset, we have a unique variable called Yield. This

Correlations in the Cross section ❘ 223

represents the percentage of the vegetable that is edible (e.g., a yield of 1.0 means you eat the whole thing; 0.5 means half is waste, like peels or seeds).

We might ask: Do vegetables with higher waste (lower yield) per pound? The scatterplot is the primary tool for this investigation. It maps one variable to the x-axis and another to the y-axis.

However, we can enhance this 2D plot to show four dimensions of data by utilizing Color (Hue) to represent the form (Fresh vs. Canned) and Size to represent the cost per cup (the true cost of eating).

Listing 10-6 creates a scatterplot to view the correlation. This listing builds on the vegetable prices data.

LISTING 10-6: MULTIVARIATE SCATTERPLOTS

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sns

# 1. Load the Data

url = "https://github.com/bkrayfield/Applied-Math-With-Python/raw/refs/heads/main/

Data/Vegetable-Prices-2022.csv"

veg_prices = pd.read_csv(url)

veg_prices = veg_prices[veg_prices['Form'].isin(['Fresh', 'Canned', 'Frozen'])]

# Visualization

plt.figure(figsize=(12, 8))

# We map 4 variables onto one chart:

# 1. x-axis: Yield (Efficiency: 0.0 to 1.0)

# 2. y-axis: RetailPrice (Cost to buy)

# 3. hue: Form (Fresh, Canned, Frozen, etc.)

# 4. size: CupEquivalentPrice (True cost to eat)

sns.scatterplot(

data=veg_prices,

x='Yield',

y='RetailPrice',

hue='Form',

size='CupEquivalentPrice',

sizes=(20, 200), # Control the min and max dot size for readability

alpha=0.6, # Transparency helps when dots overlap

palette='viridis'

)

plt.title('Vegetable Prices: Retail Cost vs. Edible Yield', fontsize=16) plt.xlabel('Yield (1.0 = 100% Edible)', fontsize=16)

plt.ylabel('Retail Price per Pound ($)', fontsize=16)

plt.legend(bbox_to_anchor=(1.05, 1), loc='upper left', fontsize=16) # Move legend outside

plt.tight_layout()

plt.show()

Listing 10-6 demonstrates the declarative power of Seaborn, allowing us to map four distinct dimensions of data onto a single 2D plane without writing complex loops. The hue='Form'

224 ❘ CHAPTER 10 IllusTRATIng CRoss-sECTIonAl DATA

parameter instructs Seaborn to inspect the Form column and automatically assign a distinct color to each category (Fresh, Canned, and Frozen). Simultaneously, the size='CupEquivalentPrice'

parameter maps the calculated serving cost to the physical area of the marker. The sizes=(20, 200) argument is a normalization tuple; it clamps the minimum dot size to 20 pixels and the maximum to 200 pixels, ensuring that cheap items are still visible while expensive items do not obscure the entire plot,

We also introduce the alpha=0.6 parameter. In scatterplots with high data density, points often stack on top of each other (called overplotting). By setting alpha (opacity) to 60%, overlapping points appear darker, revealing density clusters that would otherwise be hidden. Finally, bbox_to_anchor moves the legend outside the plot area, ensuring it doesn’t cover our data points. The resulting chart

rant than Canned items.

tionships between four different attributes of the vegetable dataset. The position of each point is determined by its yield on the x-axis (where 1.0 indicates 100% edible) and its retail price per pound on the y-axis. Furthermore, the chart uses color to distinguish between the vegetable’s form—fresh, canned, or frozen—and size to represent the CupEquivalentPrice, where larger bubbles indicate a higher cost per edible serving. This multi-dimensional view reveals distinct clusters: frozen vegetables Vegetable Prices: Retail Cost vs. Edible Yield

7

Form

Fresh

Canned

6

Frozen

CupEquivalentPrice

0.4

5

0.8

1.2

1.6

ound ($)

2.0

4

2.4

rice per P

ail P 3

etR

2

1

0.4

0.5

0.6

0.7

0.8

0.9

1.0

1.1

Yield (1.0 = 100% Edible)

FIGURE 10-6: Multivariate scatterplot.

Correlations in the Cross section ❘ 225

tend to be high-yield and lower-priced, while fresh vegetables show much greater variability across both yield and price, with several large bubbles indicating a high true cost to eat despite a moderate retail price.

Seaborn’s scatterplot function provides further customization attributes to handle complex data relationships. Beyond hue and size, you can utilize the style parameter to map a categorical variable to the shape of the markers (e.g., squares for one category, circles for another), which is particularly useful for printing in black and white. The markers argument works in tandem with style to define exactly which symbols to use. For aesthetic refinement, edgecolor and linewidth allow you to add borders to your points, helping them stand out against the background. Additionally, if you are plotting a very large dataset where overplotting makes points indistinguishable, you can switch from a scatterplot to a joint plot (using sns.jointplot), which adds histograms or density curves to the margins of the chart to visualize the distribution of each variable independently.

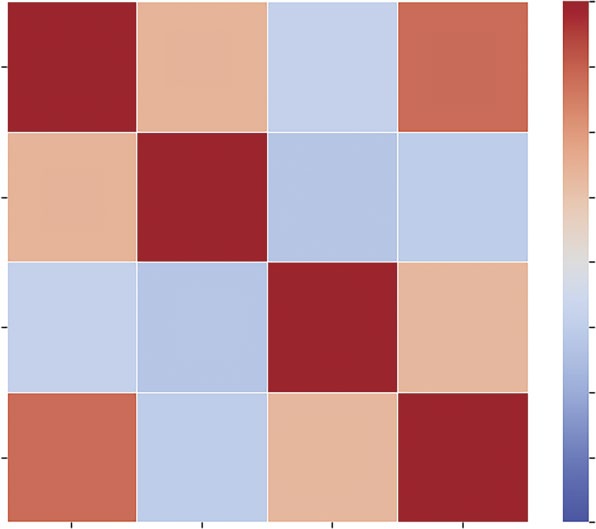

Correlation Heatmaps

When you have a dataset with many numeric variables, plotting a dozen scatterplots to check for relationships is inefficient. You need a summary view, which provides a way to scan the entire dataset for connections at a single glance. For this, you can use a correlation heatmap.

A heatmap replaces numbers with colors. It visualizes the correlation matrix, a table where every variable is compared to every other variable using the Pearson correlation coefficient. This coefficient ranges from a perfect positive correlation to a perfect negative correlation, indicating no relationship.

In our vegetable data, we have RetailPrice, Yield, CupEquivalentSize, and CupEquivalentPrice. How do these metrics relate? Does a larger serving size imply a higher price?

We can investigate these questions using a heatmap in Listing 10-7.

LISTING 10-7: THE CORRELATION HEATMAP

# 1. Prepare the Data

# Select only numeric columns for correlation calculation

numeric_cols = ['RetailPrice', 'Yield', 'CupEquivalentSize', 'CupEquivalentPrice']

correlation_matrix = veg_prices[numeric_cols].corr()

# 2. Visualization

plt.figure(figsize=(8, 6))

# Create the Heatmap

sns.heatmap(

correlation_matrix,

annot=True, # Write the data value in each cell

fmt=".2f", # Format to 2 decimal places

cmap='coolwarm', # Blue (negative) to Red (positive)

vmin=-1, vmax=1, # Anchor the colormap range

linewidths=0.5, # Space between cells

square=True # Force cells to be square

)

226 ❘ CHAPTER 10 IllusTRATIng CRoss-sECTIonAl DATA

plt.title('Correlation Matrix of Vegetable Metrics', fontsize=14)

plt.show()

Listing 10-7 begins by filtering the DataFrame to strictly numeric columns; attempting to run a correlation on text data (like vegetable names) will result in an error. We then call the.corr() method on this subset. This is a pure statistical operation that returns a new DataFrame where the indices and columns are identical and the values represent the Pearson coefficient. The resulting visu-

The visualization is handled by sns.heatmap. The cmap='coolwarm' argument is crucial here. It utilizes a diverging colormap, where distinct colors represent the extremes (blue/light for −1 and red/

dark for +1). A neutral color (white or gray) represents the middle (0). This allows the analyst to instantly spot strong relationships. We set vmin=-1 and vmax=1 to anchor the color scale; without this, the colors would scale relative to the data (e.g., the darkest shade might only represent 0.5), Correlation Matrix of Vegetable Metrics

1.00

RetailPrice

1.00

0.35

–0.21

0.70

0.75

0.50

Yield

0.35

1.00

–0.31

–0.26

0.25

0.00

CupEquivalentSize

–0.21

–0.31

1.00

0.32

–0.25

–0.50

CupEquivalentPrice

0.70

–0.26

0.32

1.00

–0.75

–1.00

e

rice

ieldY

rice

ailPet

alentSiz

R

alentP

CupEquiv

CupEquiv

FIGURE 10-7: Stacked bar chart.

Correlations in the Cross section ❘ 227

which could be misleading. Finally, annot=True overlays the actual correlation coefficients onto the squares, combining the visual intuition of the colors with the statistical precision of the numbers.

The result allows you to instantly see, for example, if Yield has a negative correlation with RetailPrice. The color intensity serves as a signal of the strength and direction of the relationship—

dark squares indicate a positive correlation (as one number goes up, the other goes up), while light squares indicate a negative correlation. The strongest relationship, marked by the darkest square, is between RetailPrice and CupEquivalentPrice, confirming that vegetables with a higher price per pound generally translate to a higher cost per edible serving. Conversely, the light squares (such as Yield vs. CupEquivalentSize) highlight weak inverse relationships, suggesting that larger or more efficient vegetables do not necessarily correlate with higher prices.

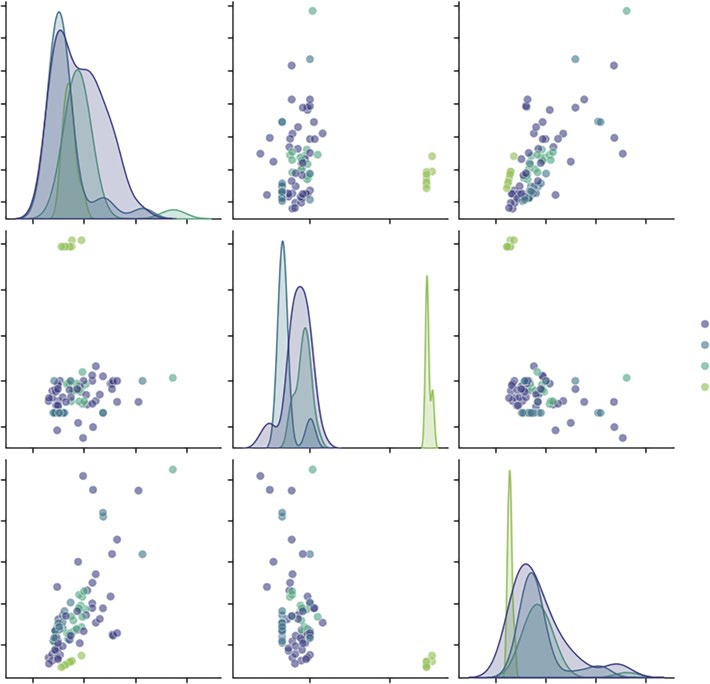

The Pair Plot

In the previous section, we used a heatmap to find correlations. However, a single number, like a correlation coefficient, can be misleading. It tells you two things are related, but it doesn’t tell you how. Is the relationship a straight line? Is it a curve? Is it driven entirely by three massive outliers?

To answer this, you need to see the raw data. Plotting every combination of variables manually (price vs. yield, price vs. size, and yield vs. size) is tedious. The pair plot automates this. It constructs a grid of charts where the diagonal shows the distribution of a single variable (histogram or kernel density estimate), and the off-diagonal cells show the relationship between two variables (scatterplot).

This visualization allows you to absorb the entire structure of your dataset in seconds.

Listing 10-8 utilizes sns.pairplot, one of the most powerful functions in the Seaborn library. By passing the hue='Form' argument, we instruct the function to not only plot the data but to segment it by category.

LISTING 10-8: GENERATING A SCATTER MATRIX WITH SEABORN

# 1. Prepare the Data

# We select the numeric metrics and one categorical column ('Form') for coloring cols_to_plot = ['RetailPrice', 'Yield', 'CupEquivalentPrice', 'Form']

subset = veg_prices[cols_to_plot]

# 2. Visualization

# sns.pairplot automatically detects numeric vs. categorical data

sns.pairplot(

subset,

hue='Form', # Color the dots/lines by the Vegetable Form

palette='viridis', # Use a distinct color scheme

height=2.5, # Size of each small subplot

plot_kws={'alpha': 0.6} # Make dots slightly transparent

)

plt.suptitle('Pairwise Relationships in Vegetable Data', y=1.02, fontsize=16) plt.show()

228 ❘ CHAPTER 10 IllusTRATIng CRoss-sECTIonAl DATA

These plots include:

➤

The diagonals: Instead of scatterplots, these show the distribution of each variable. We can instantly see that RetailPrice has a long tail (a few very expensive items), while Yield is bi-modal (items are either very efficient or very wasteful, with few in between).

➤

The scatterplots: We can spot the relationships. For example, looking at the intersection of Yield and RetailPrice, we might see that low-yield items (like corn on the cob) tend to have lower retail prices per pound, effectively balancing out the cost to the consumer.

➤

The clusters: The colors reveal if certain forms behave differently. We might see that frozen vegetables cluster tightly in a specific price/yield range, while fresh vegetables are scattered all over the map.

Pairwise Relationships in Vegetable Data

7

6

5

rice 4

ailPetR 3

2

1

2.5

2.0

Form

Fresh

ield 1.5

Y

Canned

Frozen

1.0

Dried

0.5

2.5

rice 2.0

alentP 1.5

1.0

CupEquiv 0.5

0.0

2.5

5.0

7.5

1

2

0

1

2

3

RetailPrice

Yield

CupEquivalentPrice

FIGURE 10-8: The pair plot.

summary ❘ 229

This one command effectively replaces a dozen individual chart queries, making it the ideal starting point for any cross-sectional analysis.

Beyond basic coloring with the hue parameter, sns.pairplot offers extensive control over its grid through specialized keyword arguments. While the plot_kws parameter applies styling (like transpar-ency or point size) to every scatterplot in the grid, you can use diag_kws to specifically modify the diagonal charts—for instance, by changing a kernel density estimate (KDE) to a histogram or adjusting the line thickness. For even more granularity, the vars parameter allows you to limit the plot to specific columns, preventing the grid from becoming overwhelming in large datasets. Furthermore, the kind parameter can transform the off-diagonal plots from standard scatterplots into regression plots (kind='reg'), which automatically adds a line of best fit to help visualize trends across vegetable forms.

T

TABLE 10-1: Pair Plot Customization Options

PARAMETER

FUNCTION

EXAMPLE

kind

Changes the off-diagonal

'reg' for regression lines;

plot type.

'hist' for 2D histograms.

diag_kind

Changes the diagonal

'kde' for smooth curves;

plot type.

'hist' for bars.

markers

Assigns different shapes to

markers=["o", "s", "D"]

categories.

for Fresh, Canned, Frozen.

corner

Removes the redundant

corner=True to create a

upper triangle.

cleaner, triangular grid.

diag_kws

Dictionary of properties for

{'fill': True} to color

diagonal plots.

under the KDE curve.

SUMMARY

This chapter shifted the analytical focus from the moving timeline of history to the static, high-resolution snapshot of cross-sectional analysis. It established that while time-series ask how did we get here, cross-sectional analysis indicates where we are right now. It does this by examining structure, rank, and relationship at a single distinct moment.

The chapter began by explaining the hierarchy of comparison using bar charts, noting that horizontal orientation and sorting are essential for readability when dealing with long category names or ranking tasks.

230 ❘ CHAPTER 10 IllusTRATIng CRoss-sECTIonAl DATA It then addressed the challenge of visualizing composition. While acknowledging the popularity of pie charts for showing part-to-whole relationships, it explored the cognitive difficulties of comparing angles versus lengths. The chapter introduced the donut chart as a clearer circular alternative and the stacked bar chart as a space-efficient, linear solution for comparing proportions.

Finally, this chapter explored techniques for revealing hidden patterns and distributions. It utilized multivariate scatterplots to map up to four dimensions of data—such as x, y, color, and size—onto a single plane. We then scaled this analysis up using correlation heatmaps to instantly scan for positive and negative relationships across all numeric variables, and pair plots to visualize the structure of those relationships. The chapter concluded by using boxplots to move beyond simple averages, allowing us to visualize volatility, spread, and outliers within our categorical groups.

CONTINUE YOUR LEARNING

Cross-sectional visualization is the most common form of business reporting. To master these charts, you must become comfortable with the specific arguments and formatting options within the Seaborn and Matplotlib libraries. The following resources and reference table will help you deepen your understanding of the tools introduced in this chapter:

➤

Seaborn Categorical Data: Detailed guides on bar charts, boxplots, and violin plots.

➤

Matplotlib Pie Charts: The official documentation for creating and customizing pie and donut charts.

➤

Seaborn Distribution Plots: Learn more about pair plots and complex distribution visualizations.

Essential Cross-sectional Functions

T

relationships in this chapter.

TABLE 10-2: Key Summary Statistics Functions

STATISTIC

FUNCTION

DESCRIPTION

.sort_values()

Pandas

Sorts the DataFrame. Essential before

plotting bar charts to create a readable

staircase effect.

sns.

Seaborn

Creates a bar chart. Using horizontal

barplot(orient='h')

orientation helps read long category

labels.

Continue Your learning ❘ 231

STATISTIC

FUNCTION

DESCRIPTION

plt.pie()

Matplotlib

Generates a circular composition chart.

Requires a single array of values rather

than X/Y coordinates.

plt.Circle()

Matplotlib

Used to draw a white circle over a pie

chart to create a donut chart aesthetic.

plt.barh(left=...)

Matplotlib

Creates horizontal bars. By calculating

the left parameter, we can chain bars

together to create stacked bar charts.

sns.

Seaborn

Plots relationships between two vari-

scatterplot(hue=,

ables, adding color (hue) and bubble

size=)

size (size) for extra dimensions.

.corr()

Pandas

Calculates the Pearson correlation

coefficient matrix for all numeric col-

umns in a DataFrame.

sns.heatmap()

Seaborn

Visualizes a correlation matrix using

color intensity to show relationship

strength.

sns.pairplot()

Seaborn

Generates a grid of scatterplots and

histograms to visualize every variable

against every other variable.

sns.boxplot()

Seaborn

Visualizes the distribution, median,

and outliers of data based on the

five-number summary.

11

Illustrating Alternative

Data Types

In traditional econometrics and financial analysis, data is almost exclusively structured. It arrives in neat, tabular formats, rows of observations and columns of variables, ready for immediate ingestion by statistical software. However, the proliferation of digital footprints has given rise to alternative data: information derived from non-traditional sources that acts as a proxy for economic or behavioral activity.

Alternative data includes satellite imagery tracking retail parking lots, credit card transaction logs, social media sentiment, web scraping of product prices, and blockchain ledgers. The defining characteristic of this data is that it is often unstructured or semi-structured. It does not fit naturally into an X-Y plane.

The challenge of illustrating alternative data is one of abstraction. We cannot simply plot the raw data; we must first transform qualitative signals (words, locations, links) into quantitative geometry. This chapter explores the distinct visualization grammars required for text, space, and networks.

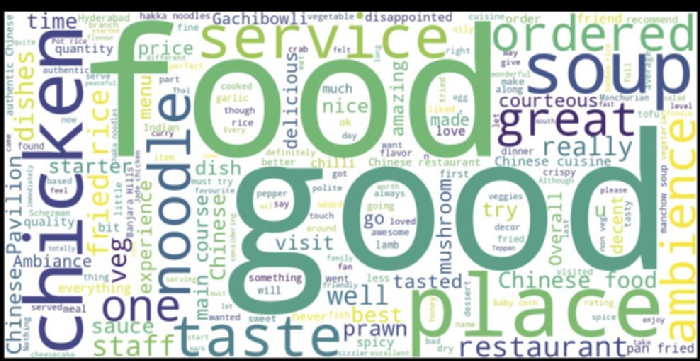

TEXTUAL ANALYSIS

The previous chapters dealt with structured data. This is information that fits neatly into rows and columns, prices, dates, quantities, and coordinates. It is numerical, sortable, and ready for calculation.

Text, however, is the largest source of unstructured data available to the modern analyst. It is messy, subjective, and highly variable. A single sentiment (e.g., “This food is bad”) can be expressed in thousands of different ways (“gross,” “inedible,” “yuck,” “not my favorite”). A spreadsheet cannot natively sum these up.

To visualize text, we must first perform tokenization. This is the process of breaking a stream of natural language into measurable units, known as tokens (usually individual words). Once the

234 ❘ CHAPTER 11 IllusTRATIng AlTERnATIvE DATA TyPEs text is broken into tokens, we can count them, measure their sentiment, and map their relationships.

We essentially transform qualitative human language into quantitative data.

Before we visualize, we must understand our source material. In this chapter, we analyze a dataset titled Restaurant reviews.csv.

This dataset is a classic example of alternative data. While a restaurant’s financial ledger tells you how much money they made, it doesn’t tell you why. The review data—specifically the unstructured text written by customers—contains the answer. It holds the why behind the revenue.

This dataset contains three critical columns:

➤

Restaurant: The entity being reviewed.

➤

Review: The unstructured text we want to analyze.

➤

Time: The timestamp, allowing us to track changes over history.

The first step is always inspection. We never start analyzing data blindly. We must load the file and print the first few rows to verify that the data was read correctly, check for missing values, and understand the column names. The following code snippet will open and show the first few rows of data: import pandas as pd # Load the dataset

url = "https://github.com/bkrayfield/Applied-Math-With-Python/raw/refs/heads/

main/Data/Restaurant%20reviews.csv" df = pd.read_csv(url)

# Display the first few rows to understand the structure print(df.head()) Running this code reveals the tabular structure of our data. You will see a review column filled with sentences like “The chicken was dry” or “Great service!”